Executive Summary

Between late February and March 2026, a threat actor known as TeamPCP conducted one of the most consequential software supply chain campaigns in recent memory. Starting with a single incomplete credential rotation at Aqua Security, the group cascaded through some of the most widely trusted tools in cloud-native development — Trivy, Checkmarx KICS, LiteLLM, Telnyx, and dozens of npm packages — harvesting credentials from thousands of CI/CD pipelines and establishing persistent footholds on developer machines and production infrastructure worldwide.

At Ransom-ISAC, we believe this campaign represents a turning point. Threat actors have finally operationalised what security researchers have warned about for years: underfunded open source package maintainers are the most efficient entry point into the supply chains of virtually every organisation on earth. A single compromised service account, a single long-lived token, a single mutable tag reference — and you own pipelines at scale.

This is also a historic moment for ransomware. TeamPCP has announced partnerships with CipherForce and Vect ransomware groups, signalling a deliberate pivot from pure credential theft toward ransomware-as-a-service and extortion at scale. The Trivy campaign was not an end — it was reconnaissance and infrastructure building.

We are publishing this guidance because the defensive recommendations are being missed in the noise of incident analysis. Many excellent technical writeups already cover the malware. What organisations actually need right now is to know what to change.

Audience Guide:

DevSecOps & Hardening: Start at Who Is TeamPCP → The Campaign: A Condensed Timeline → Recommendations: What To Actually Change

Security Researchers: Start at Who Is TeamPCP → The Campaign: A Condensed Timeline → Determining If You Were Exposed → Full IOC Reference

SOC / Incident Response: Start at Who Is TeamPCP → The Campaign: A Condensed Timeline → Determining If You Were Exposed → Full IOC Reference

Who Is TeamPCP

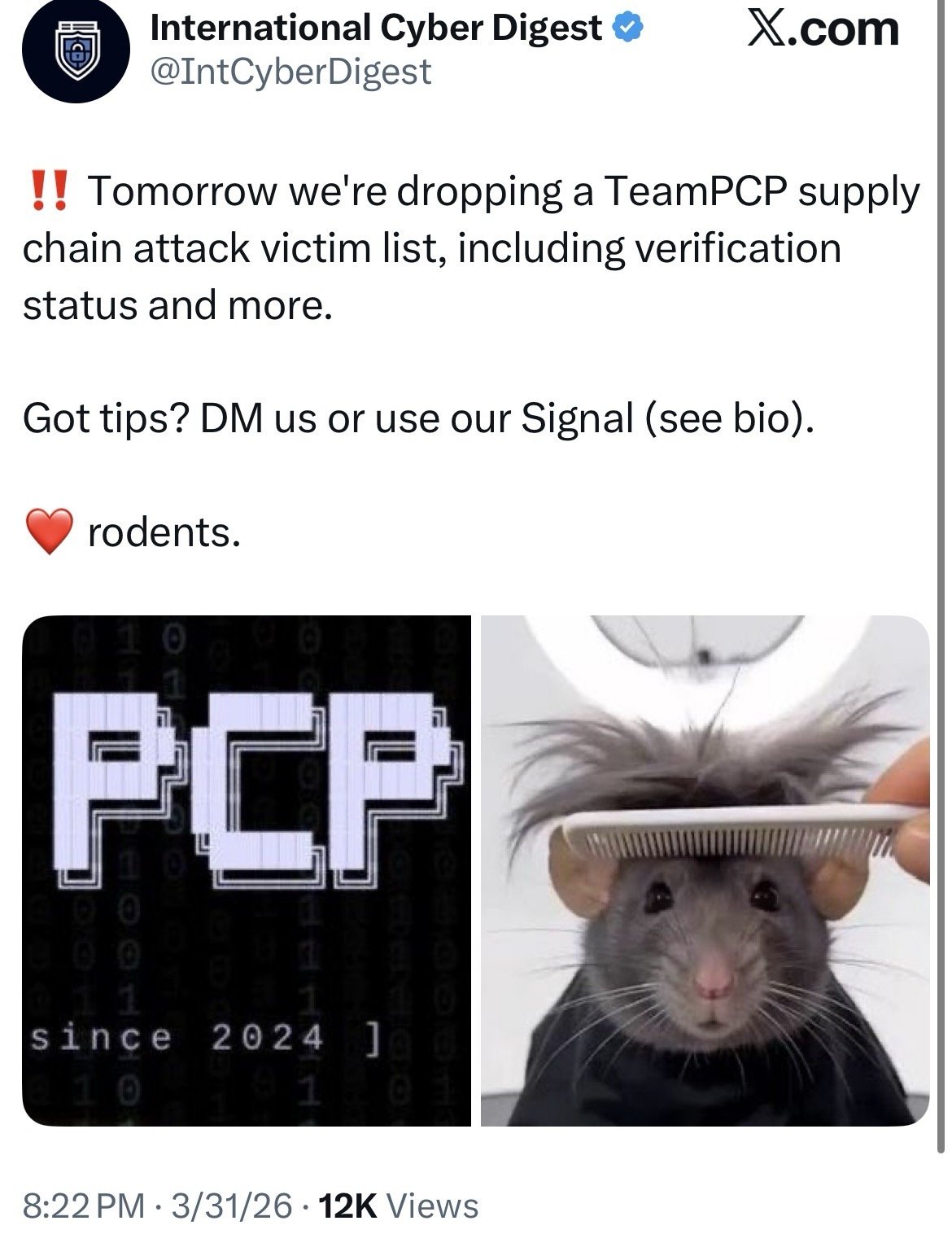

TeamPCP (also tracked as DeadCatx3, PCPcat, ShellForce, CanisterWorm) has been active since at least September 2025, though their own branding states "since 2024".

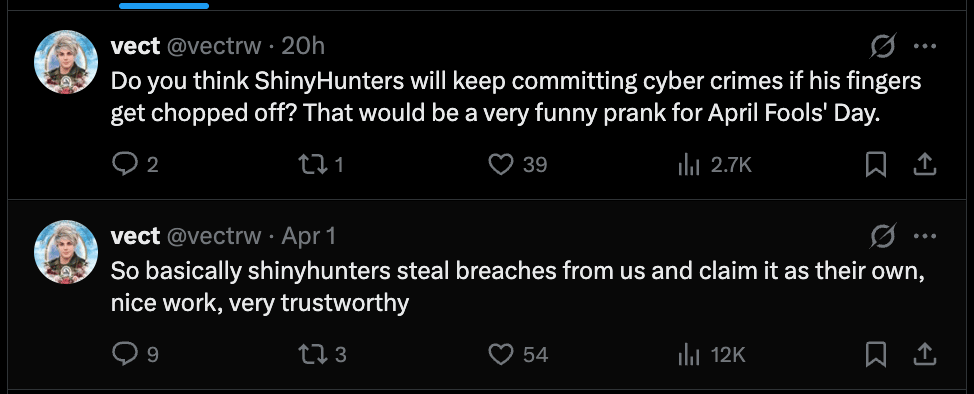

Figure 1: TeamPCP public branding on X. The group has operated openly and provocatively, including a rodent mascot consistent with their PCPcat alias. Source: @IntCyberDigest

The group first gained attention through the React2Shell campaign (CVE-2025-55182), which leveraged remote code execution against cloud endpoints. Its early operations focused on ransomware, cryptocurrency mining, and cryptocurrency theft, and were characterised by a distinctive use of port 666 in exploitation activity.

What makes TeamPCP notable is the speed of its capability evolution. In under six months, they have moved from opportunistic cloud exploitation to coordinated, multi-stage software supply chain attacks, with a level of operational sophistication that warrants an Initial Access Syndicate classification. We have not seen this playbook executed at this scale before.

This distinction matters. Traditional Initial Access Brokers (IABs) operate as a commodity service: they compromise a single organisation, package the access, and sell it on criminal marketplaces. They are transactional, single-target, and largely interchangeable. TeamPCP represents something structurally different — an Initial Access Syndicate: a group that retains access across hundreds of organisations simultaneously, weaponises that access at scale through downstream ransomware partnerships, and builds and maintains its own diverse malware portfolio rather than relying on off-the-shelf tooling.

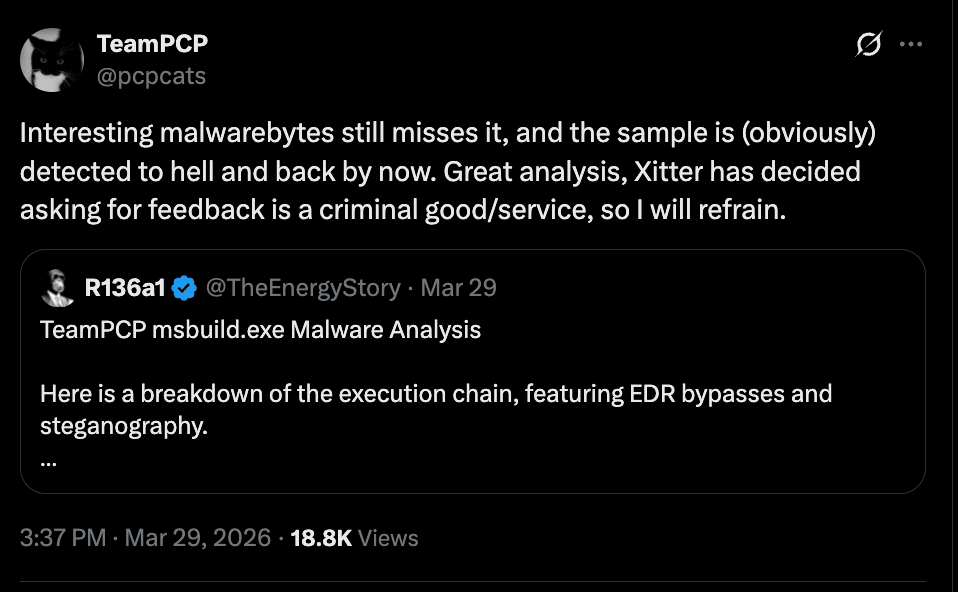

The breadth of that portfolio has drawn significant attention across the research community. In a matter of weeks, TeamPCP deployed: a multi-stage credential stealer targeting CI/CD pipelines and developer machines; CanisterWorm, a Kubernetes-targeting wiper using privileged DaemonSets for destructive cluster-wide attacks; and a C++ RAT that T00001B has deliberately withheld from public release, describing it as "fast and evasive" and "a waste" to expose. Each of these would be notable in isolation. Together, they indicate a group operating at a level of capability breadth that has genuinely surprised researchers who cover both financially motivated and state-sponsored actors.

The group's willingness to publicly showcase their arsenal — while simultaneously withholding their most capable components — reflects a deliberate strategy: establish credibility within the threat actor community while preserving operational advantage. Their own social media presence documents this contradiction in real time.

Figure 2: TeamPCP publicly engaging the security research community on X, openly discussing malware capabilities while selectively withholding their most advanced tooling — a strategy of deliberate credibility-building combined with capability hoarding.

The shift from IAB to Initial Access Syndicate is not just a classification upgrade — it represents a fundamental change in the threat model organisations need to plan for.

Group Structure and Key Personas

OSINT gathered from Telegram and public social media reveals a group operating with multiple distinct personas rather than a single actor:

- @pcpcats, @KivuliFox, @xploitrsturtle2 — publicly identified X accounts for the group, actively engaging with researchers and asking for feedback on their own malware

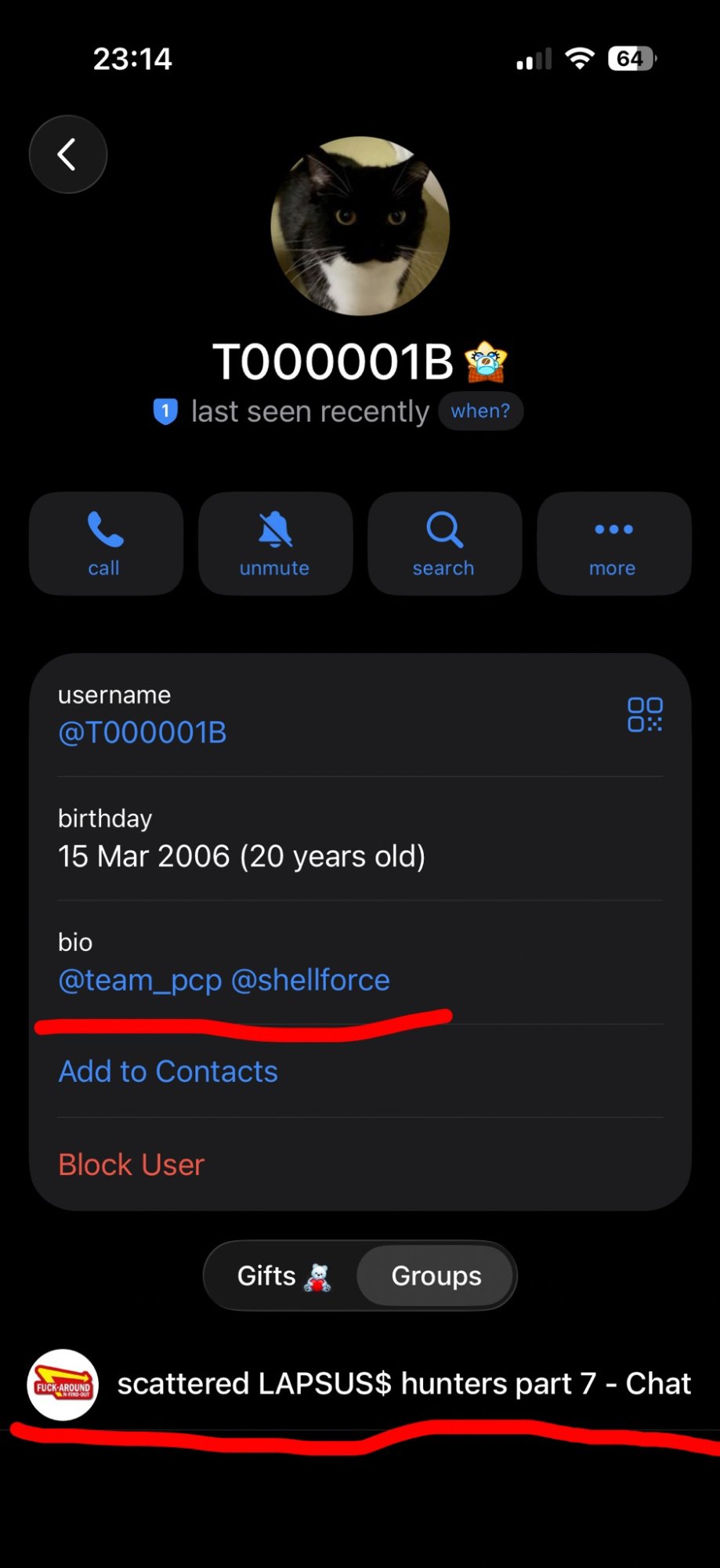

- T00001B — a key operator who has taken formal ownership of the CipherForce project and TeamPCP. Their Telegram profile lists a date of birth of 15 March 2006, making them 20 years old at the time of writing. Their bio links @team_pcp and @shellforce. They are also visible in "scattered LAPSUS$ hunters" community chats, suggesting possible overlap with, or at least awareness of, the former LAPSUS$ tooling and community.

Figure 3: Telegram profile of T00001B, a key TeamPCP operator. Age 20 at time of writing. Note the LAPSUS$ community chat visible at the bottom of the screen.

- Xpl0itrs — referenced in an internal TeamPCP Telegram message ("Wonderful work Xpl0itrs"), suggesting an affiliate or sub-operator structure rather than a sole actor operation

Figure 4: Internal TeamPCP Telegram acknowledgement of operator "Xpl0itrs", indicating a structured affiliate model.

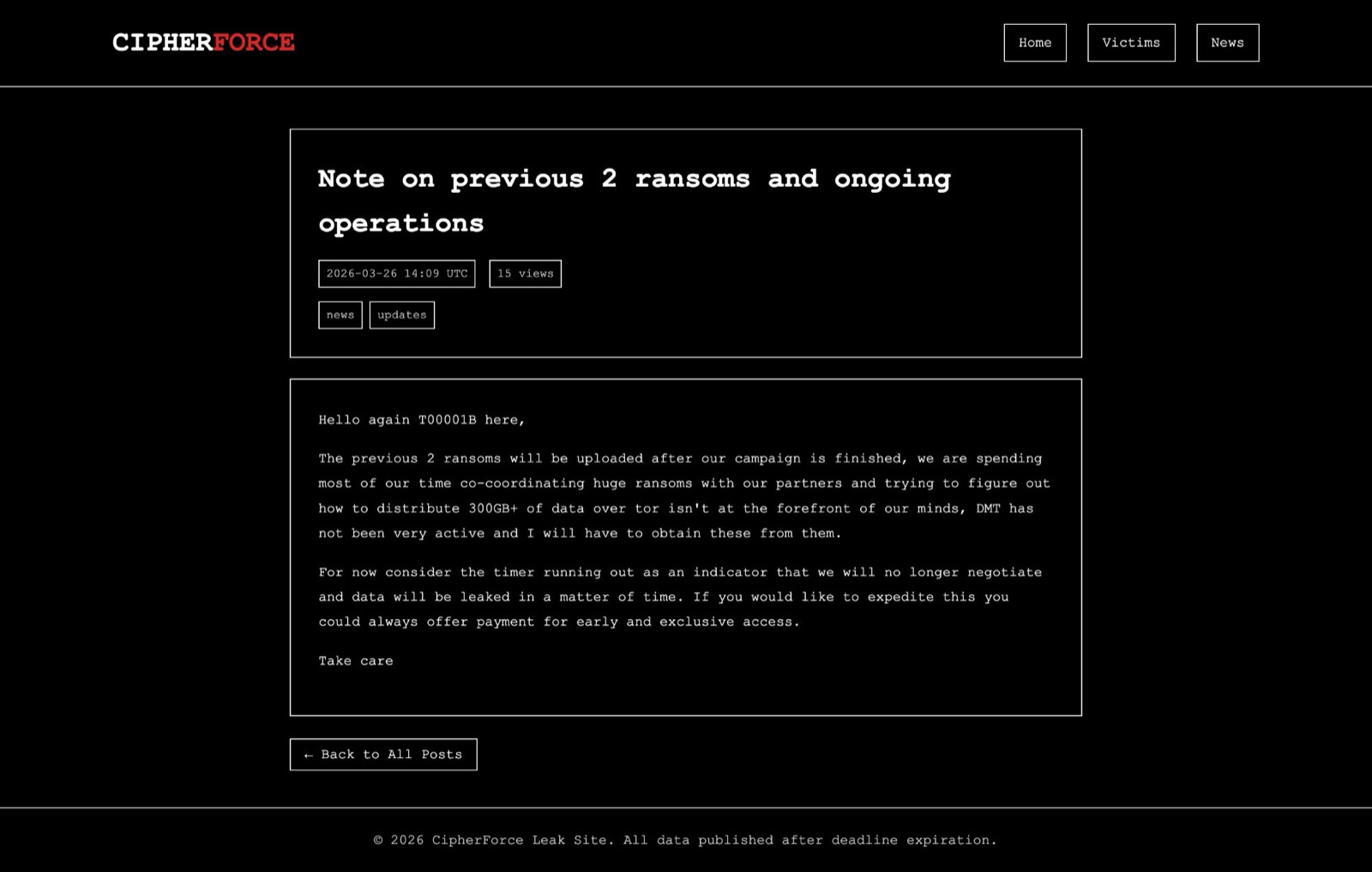

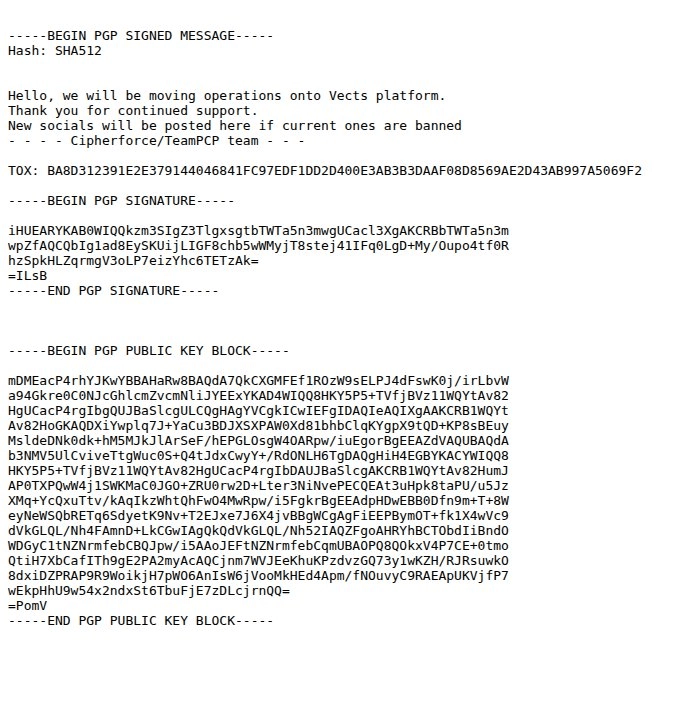

T00001B posted on the CipherForce leak site confirming that they hold C++ source code for the RAT and describing it as "fast and evasive, it would be a waste". This suggests ongoing development and deliberate capability hoarding rather than full public release. CipherForce had hinted that their leak site "might become inactive in the months to come"; as it turns out, that timeline accelerated dramatically, and the leak site was already dark at the time of writing. Enter the partnership with Vect.

Figure 5: T00001B's post on the CipherForce leak site (March 25, 2026) formally claiming ownership of TeamPCP and CipherForce, and publishing a PGP key for member verification.

The Ransomware Partnership Model

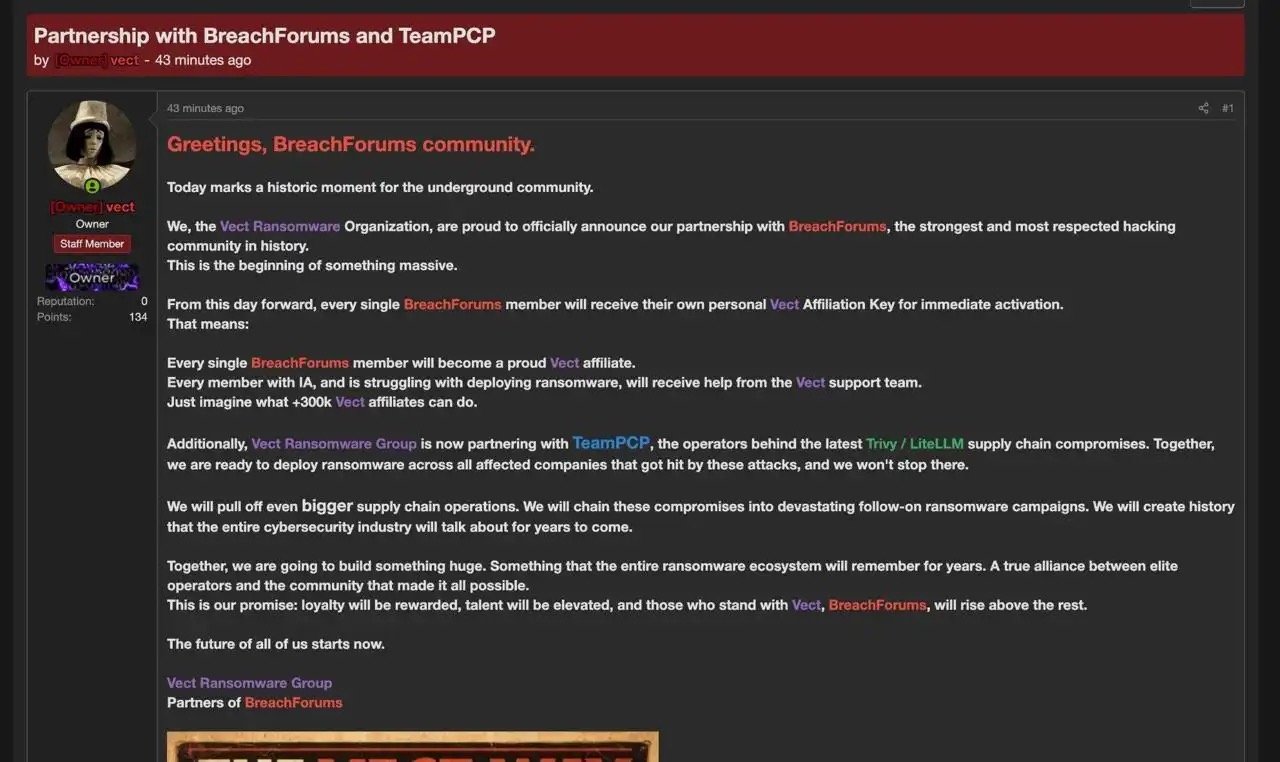

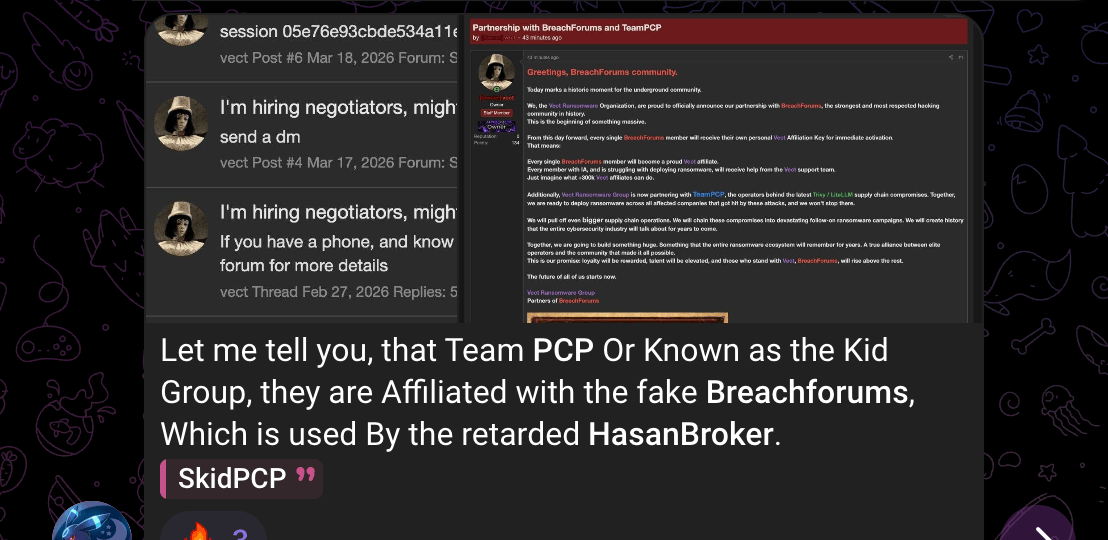

Their recent announcements of partnerships with CipherForce and Vect on BreachForums signal a deliberate division of labour: TeamPCP concentrates on supply chain initial access, ransomware partners handle extortion monetisation.

The Vect partnership announcement on BreachForums was explicit: every BreachForums member would receive a Vect affiliation key, with Vect specifically naming the Trivy and LiteLLM compromises as the source of companies they intend to ransom. The post stated intent to deploy ransomware "across all affected companies that got hit by these attacks." With a claimed 300k+ potential affiliates, the scale of threatened follow-on activity is significant.

Figure 6: Vect Ransomware Group's BreachForums announcement explicitly naming the Trivy and LiteLLM supply chain compromises as the basis for planned ransomware deployment.

As of late March 2026, the CipherForce leak site lists at least 16 confirmed victims. T00001B confirmed in a site post that the group is sitting on 300GB+ of data pending distribution, noting they are "co-ordinating huge ransoms with partners." Timer expiry on the site means data is leaked unless victims pay — and they are offering early paid access to stolen data before the timer expires.

Figure 7: CipherForce leak site update (March 26, 2026) from T00001B confirming 300GB+ of data in hand and active ransom negotiations. The post also offers early paid access to stolen data.

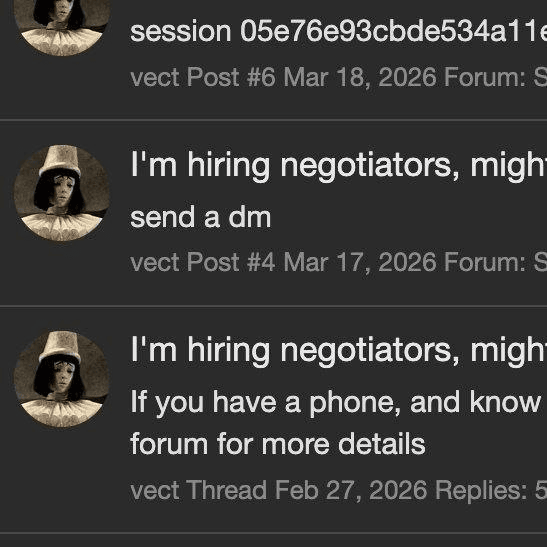

This partnership was not formed overnight. Vect had been actively recruiting negotiators and affiliates as early as February 2026, a signal that the group was deliberately scaling its operational capacity ahead of a major campaign. That hiring push, now visible in retrospect, points to a ransomware group consciously positioning itself for a step-change in volume — and TeamPCP's supply chain access gave them exactly the victim pipeline they needed.

Figure 8: Vect actively recruiting negotiators, indicating the group's increased operational presence and intent to scale extortion activity beyond their existing capacity.

Scale of Alleged Theft

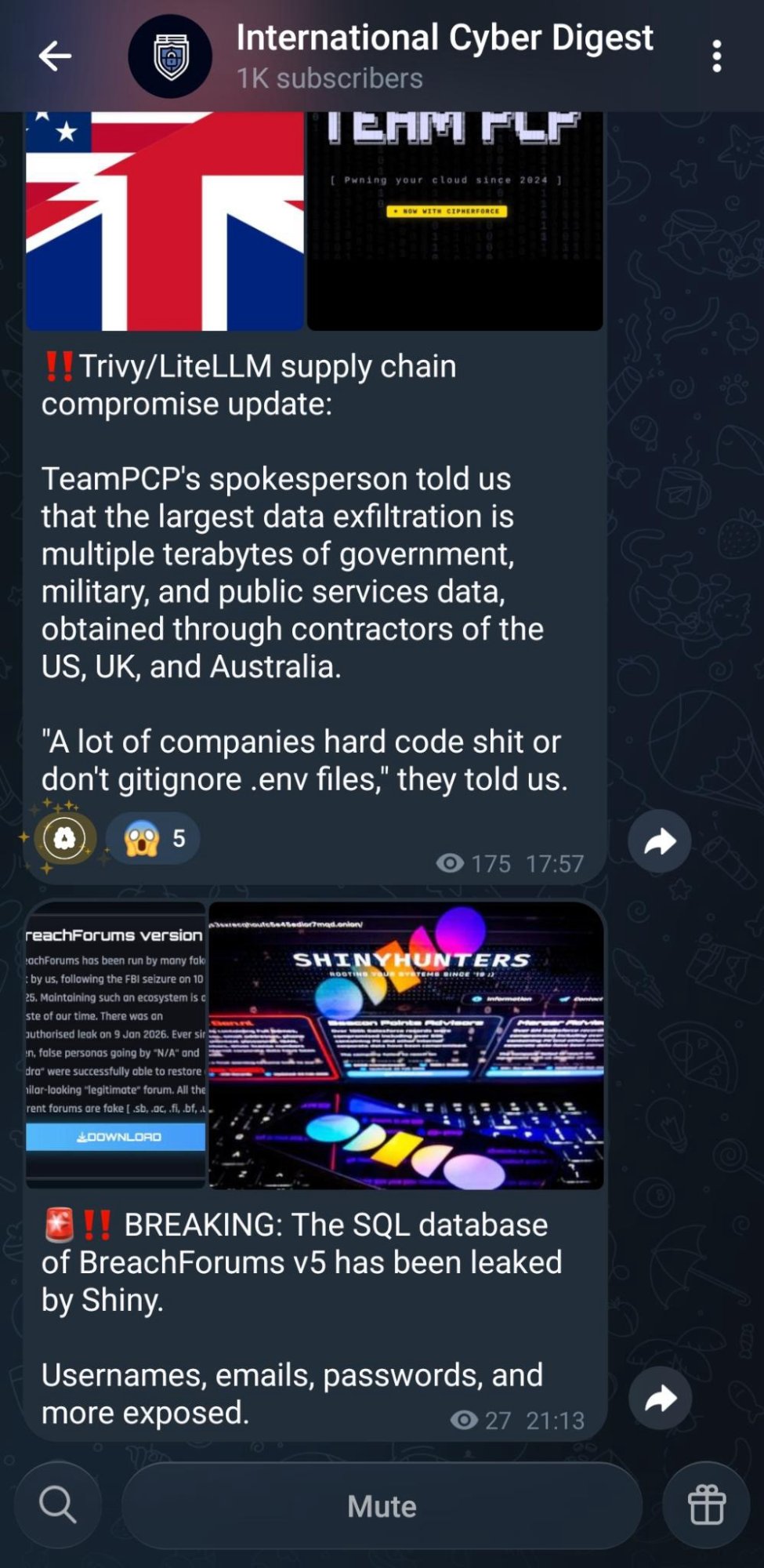

A TeamPCP spokesperson stated to International Cyber Digest that the largest single exfiltration is multiple terabytes of government, military, and public services data, obtained through contractors of the US, UK, and Australia. If accurate, this elevates the campaign from financially motivated cybercrime to a potential national security concern.

Figure 9: International Cyber Digest Telegram reporting TeamPCP spokesperson claims regarding government and military data. Also visible: breaking news of BreachForums v5 SQL database leaked by ShinyHunters — a reminder that the underground ecosystem is simultaneously expanding and consuming itself.

The public profile TeamPCP has cultivated also brought friction with other prominent groups — particularly those with more established or louder presences in the criminal underground. These inter-group conflicts are a notable feature of the current threat landscape: alliances of convenience coexist with open hostility between actors who sometimes share platforms, infrastructure, and even intelligence. For defenders, the drama is a secondary concern, but the dynamics it reveals — who is competing with TeamPCP, which groups feel threatened by their rise — carry genuine intelligence value.

Figure 10: Public conflict between TeamPCP and a rival group in the criminal underground — illustrating the volatile inter-group dynamics of the current ecosystem, where today's co-platform actor can become tomorrow's adversary.

Figure 11: Further evidence of inter-group tensions, highlighting how competitive pressure between emerging actors creates both structural instability within criminal coalitions and new intelligence opportunities for researchers tracking group relationships.

TeamPCP's own explanation for why they were so successful: "A lot of companies hard code shit or don't gitignore .env files." Blunt, but consistent with what the stealer code confirms — .env files, shell histories, and hardcoded credentials were systematically targeted across every wave.

Operational Security Evolution

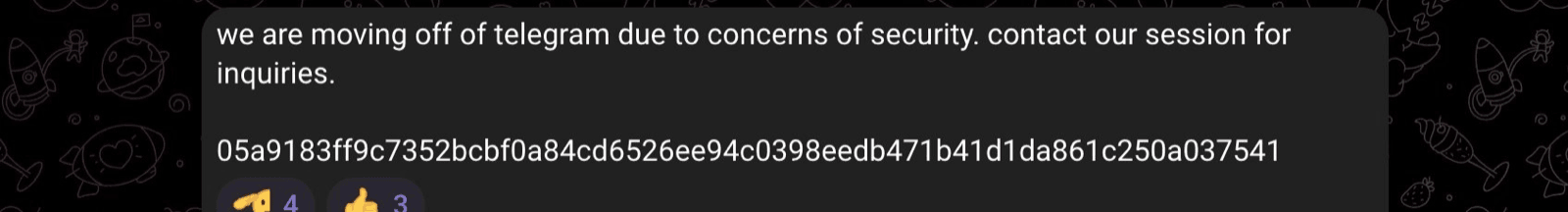

By late March, the group announced they were moving off Telegram due to security concerns, publishing a Session ID (05a9183ff9c7352bcbf0a84cd6526ee94c0398eedb471b41d1da861c250a037541) for future contact. This shift to a more privacy-preserving platform suggests increased law enforcement and researcher pressure is having an effect, or at minimum that the group is aware of it.

Figure 12: TeamPCP announcing departure from Telegram in favour of Session, citing security concerns. A pattern increasingly common among high-profile threat actors facing law enforcement and researcher scrutiny.

The CipherForce/TeamPCP PGP-signed announcement of the move to Vect's platform also demonstrates operational security awareness uncommon for groups this public. What makes the shift strategically significant is not just the OPSEC improvement but what the move represents structurally. CipherForce is a relatively new group, and so is Vect. Neither had the established affiliate network or operational reach of more mature ransomware operations. What TeamPCP brought to the table was a ready-made victim pipeline: thousands of organisations with confirmed credential exposure. The motivation is straightforward: Vect gets a victim list it could not build alone, and TeamPCP and CipherForce get monetisation infrastructure without having to build or operate ransomware themselves. It is a coalition of emerging actors, each supplying what the others lack, which is arguably more dangerous than a single established group because there is no single point to disrupt.

Figure 13: PGP-signed communication from CipherForce/TeamPCP announcing operational migration to Vect's platform. The use of PGP signing for authentication indicates deliberate OPSEC capability within the group.

Note on the Axios compromise: On March 31, 2026, the widely used axios npm package (100M+ weekly downloads) was compromised via a hijacked maintainer account and used to drop a cross-platform RAT. At the time of writing, Google Threat Intelligence Group attributes this to UNC1069, a North Korea-nexus actor, based on the use of the WAVESHAPER.V2 backdoor and infrastructure overlaps with past UNC1069 activity. Researchers have not reached consensus: Datadog's analysis notes that the TTPs do not match the recent TeamPCP campaign, while SANS raises the possibility that it is a continuation of that campaign. See Datadog's technical breakdown and SANS Internet Stormcast coverage for the respective analyses. We include it here because the timing and attack surface are directly relevant, and because the defensive recommendations are identical regardless of attribution. Organisations should treat it as part of the same threat landscape even if the actor is distinct.

The Campaign: A Condensed Timeline

Rather than reproduce the extensive technical analysis already available from CrowdStrike, Endor Labs, StepSecurity, and others (all credited below), we present the key events and what they tell us strategically.

| Date | Event | Significance |
|---|---|---|
| Feb 28, 2026 | Initial Aqua Security breach via pull_request_target pwn request | Credentials stolen; incomplete remediation leaves residual access |
| Mar 19, 2026 ~17:43 UTC | Trivy GitHub Actions tag poisoning begins; 76/77 tags compromised within hours | Credential stealer active in thousands of CI/CD pipelines worldwide |
| Mar 20, 2026 ~05:40 UTC | Aqua Security remediates trivy-action tags | ~12 hour exposure window for GitHub Actions users |
| Mar 20, 2026 | npm wave: 47+ packages across @emilgroup, @opengov, @v7 namespaces infected using stolen npm tokens | Executed in under 60 seconds; SDK-squatting targets high-privilege corporate environments |
| Mar 22, 2026 | Argon-DevOps-Mgt service account token tested (ghost branch, created and deleted same second), then used to deface all 44 aquasec-com internal repos | Long-lived PAT bridging two orgs = complete internal org compromise |
| Mar 22, 2026 | Docker Hub: malicious trivy:0.69.5 and 0.69.6 images published | Extended exposure window; Google mirror.gcr.io continued serving malicious images after Docker Hub cleanup |
| Mar 23, 2026 | Checkmarx KICS GitHub Action compromised; all 35 version tags poisoned | Same playbook, different vendor; confirms systematic approach |
| Mar 23, 2026 | CanisterWorm wiper deployed targeting Iran | Destructive capability confirmed; Kubernetes cluster destruction via privileged DaemonSets |
| Mar 24, 2026 | LiteLLM PyPI poisoned (v1.82.7, v1.82.8) | Shifts to Python ecosystem; .pth file injection means malware runs on every Python process start |
| Mar 25, 2026 | T00001B posts on CipherForce leak site formally claiming ownership of TeamPCP and CipherForce; publishes PGP key for member verification | Confirms consolidated leadership; group transitions from anonymous to openly attributed operation |
| Mar 26, 2026 | CipherForce leak site update: 300GB+ data confirmed in hand; early paid access to stolen data offered; active ransom negotiations underway | Extortion model fully activated; monetisation of supply chain access begins in earnest |
| Mar 27, 2026 | Telnyx PyPI poisoned (v4.87.1, v4.87.2) | WAV steganography introduced; Windows targeting added; RSA key matches LiteLLM attribution confirming same actor |
| Late Mar, 2026 | Vect ransomware partnership announced on BreachForums; every member offered affiliation key; Trivy and LiteLLM victims named as ransomware targets | Pivot from credential theft to ransomware-as-a-service; 300k+ potential affiliates given access to victim pipeline |
| Late Mar, 2026 | TeamPCP departs Telegram; publishes Session ID for future contact | Increased OPSEC under law enforcement and researcher pressure; shift to encrypted, metadata-resistant comms |
| Mar 31, 2026 | Axios npm compromised (v1.14.1, v0.30.4) via hijacked maintainer account — attributed to UNC1069, not TeamPCP | Separate North Korea-nexus actor; same attack surface; 100M weekly downloads; ~3 hour exposure window |

Figure 14: The LiteLLM attack write-up appearing on BreachForums behind a paywall, illustrating how TeamPCP's operations are being commercialised within the criminal underground — intelligence on the attack itself being sold to other threat actors.

What the timeline tells us: Each wave used credentials stolen in a previous wave. Trivy tokens funded the npm wave. npm tokens funded LiteLLM. LiteLLM tokens funded Telnyx. This is a credential cascade — a single initial compromise that compounds through every downstream trust relationship. The attacker did not need to find a new vulnerability for each target. They just needed to sift through their growing vault of stolen credentials and pick the next high-value publisher.

Each successive wave also showed technical evolution: monolithic bash scripts became modular loaders, inline payloads became WAV steganography, and domain-based C2 became blockchain-hosted canisters on the Internet Computer Protocol (ICP), which resist traditional takedowns. This is not a group that lets beginner mistakes stand. The first version of the Telnyx payload had a one-character typo (Setup() instead of setup()) that killed the payload on import; they fixed it 16 minutes later. They are iterating in real time.

It would be a mistake to let the public theatre — the rodent mascot, the researcher banter, the inter-group feuds — obscure what the technical record actually shows. Whatever implosion or attrition this ecosystem eventually experiences, the supply chain campaign itself must be judged on its own merits: faster iteration than most financially motivated groups manage in years, sustained operational security under active researcher scrutiny, and a credential cascade that compounded through five major targets in under two weeks.

Figure 15: Despite the public banter, partnership announcements, and inter-group theatrics, TeamPCP's supply chain campaign demonstrated a level of technical execution and operational coordination that stands on its own — a credential cascade that compounded through five major targets in under two weeks, with each wave funded by secrets stolen in the last.

What Each Attack Had in Common

Across every wave, three things were consistently true:

1. Mutable references were the entry point. Git tags, the "latest" Docker tag, unpinned package versions: every victim organisation was pulling code whose content could change without their knowledge or consent. If every pipeline had pinned to immutable commit SHAs, the GitHub Actions waves would have had zero impact.

2. Long-lived credentials enabled lateral movement. The Argon-DevOps-Mgt service account had a long-lived PAT, no MFA, and admin access across two organisations. One stolen token, one ghost branch test, seven hours of patience — then 44 internal repos defaced in 2 minutes. If that token had expired after 24 hours, this step of the attack fails.

3. Security tools were the target because they are the most trusted. Trivy scans for vulnerabilities. KICS scans infrastructure-as-code. These tools run in pipelines with elevated trust and broad secret access because that is what they need to do their job. Compromising them is not ironic — it is rational. They are the highest-leverage targets in the supply chain.

Recommendations: What To Actually Change

The following recommendations are ordered by impact. We have tried to be honest about what each one protects against and what it does not.

1. Pin GitHub Actions to Commit SHAs, Not Tags

This is the single highest-impact change any organisation can make right now.

```yaml
# Vulnerable: mutable, silently changeable
uses: aquasecurity/trivy-action@<tag>

# Safe: cryptographically tied to exact code
uses: aquasecurity/trivy-action@57a97c7e7821a5776cebc9bb87c984fa69cba8f1
```

A commit SHA is a hash computed over the commit itself: its file tree, its parent commits, and its metadata. Change one character anywhere in the code and the SHA changes. An attacker with write access to a repository cannot repoint a SHA the way they can repoint a tag. Every organisation that referenced the Trivy Actions by tag during the March 19–20 window was exposed; every organisation that pinned them to a clean commit SHA was not.
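The content-addressing property described above can be seen directly. The sketch below reproduces how Git derives the object ID of a blob (a file's contents) using only Python's hashlib. It is a simplified illustration: it assumes Git's default SHA-1 object format and covers blobs only, whereas a commit ID additionally hashes the tree, parent IDs, author and message, so a change to any file still propagates up to a new commit SHA.

```python
import hashlib


def git_blob_sha1(content: bytes) -> str:
    """Return the object ID Git assigns to a blob with this content.

    Git hashes a header of the form b"blob <size>\\0" followed by the raw
    bytes, so the ID depends on every byte of the file.
    """
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


if __name__ == "__main__":
    original = b"hello\n"
    tampered = b"hellp\n"  # one character changed
    print(git_blob_sha1(original))  # same ID as: echo hello | git hash-object --stdin
    print(git_blob_sha1(tampered))  # a completely different ID
```

There is no way to keep the ID and change the bytes short of a hash collision, which is why a SHA reference cannot be silently repointed the way a tag can.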

The overhead concern is legitimate but solvable. Use Renovate with pinDigests: true, or Dependabot (which keeps SHA-pinned Actions up to date), to open pull requests automatically when SHAs need updating. This turns SHA pinning from a maintenance burden into a review gate, which is actually what you want: every dependency update becomes a conscious decision.

```json5
// Renovate config (renovate.json5, which permits comments)
{
  "packageRules": [{
    "matchManagers": ["github-actions"],
    "pinDigests": true
  }]
}
```

Add a comment so humans know what version a SHA corresponds to:

```yaml
# trivy-action v0.35.0 (the only clean version)
uses: aquasecurity/trivy-action@57a97c7e7821a5776cebc9bb87c984fa69cba8f1
```

Safe versions to pin to as of publication:

| Component | Version | Clean Commit SHA |
|---|---|---|
| trivy-action | v0.35.0 | 57a97c7e7821a5776cebc9bb87c984fa69cba8f1 |
| setup-trivy | v0.2.6 | 3fb12ec12f41e471780db15c232d5dd185dcb514 |
| trivy binary | v0.69.3 | 6fb20c8edd70745d6b34bff0387b53b03c8a760a |
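Before pinning, it helps to know where tag references remain. The sketch below is a line-based heuristic, not a YAML parser: it scans workflow files for uses: references whose ref is not a full 40-character commit SHA. It assumes workflows live under .github/workflows/ and deliberately skips local actions (./...) and docker:// references. Treat it as a starting point rather than a complete linter.

```python
import re
import sys
from pathlib import Path

# Matches "uses: owner/repo[/path]@ref", optionally as a list item or quoted,
# capturing the action path and the ref; trailing "# comments" are ignored.
USES_RE = re.compile(r"""^\s*(?:-\s*)?uses:\s*['"]?([^@\s'"]+)@([^\s'"#]+)""")
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def find_unpinned(text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, action, ref) for each uses: not pinned to a full SHA."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        match = USES_RE.match(line)
        if not match:
            continue
        action, ref = match.groups()
        if action.startswith(("./", "docker://")):
            continue  # local actions and container images are pinned differently
        if not FULL_SHA_RE.match(ref):
            findings.append((lineno, action, ref))
    return findings


def main(root: str = ".") -> int:
    workflows = Path(root, ".github", "workflows")
    if not workflows.is_dir():
        return 0
    status = 0
    for path in sorted(workflows.glob("*.y*ml")):
        for lineno, action, ref in find_unpinned(path.read_text(encoding="utf-8")):
            print(f"{path}:{lineno}: {action}@{ref} is not pinned to a commit SHA")
            status = 1
    return status


if __name__ == "__main__":
    if main(sys.argv[1] if len(sys.argv) > 1 else ".") != 0:
        sys.exit(1)  # fail the CI step when any reference is unpinned
```

Run from the repository root as a CI step, it prints one line per unpinned reference and exits non-zero if any are found, which makes it usable as a merge gate while the Renovate or Dependabot pull requests catch up.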

2. Enforce Expiry on Personal Access Tokens

The lateral movement from the Trivy compromise to the internal aquasec-com org defacement was enabled by a single long-lived PAT on the Argon-DevOps-Mgt service account. That token was stolen in an earlier wave, tested with a ghost branch, and then used seven hours later. A 24-hour expiry would have broken this chain before the attacker acted, and even a 7-day maximum sharply limits how long any stolen token remains useful.

Immediate actions:

- Audit all service account PATs in your GitHub organisation for expiry dates

- Enforce maximum token lifetime at the organisation level (GitHub Enterprise supports this)

- Migrate publishing workflows to OIDC Trusted Publishers (GitHub Actions OIDC for PyPI, short-lived tokens for npm) — this eliminates long-lived tokens from the publishing pipeline entirely

- Any token with access across multiple organisations is a critical single point of failure — scope tokens to the minimum required organisation and repository
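The audit and scoping checks above can be automated once you have a token inventory. The sketch below assumes a hypothetical export of token records (name, creation and expiry times, organisations the token can reach); the TokenRecord fields and the 7-day default are illustrative assumptions, not a GitHub API schema. It flags tokens with no expiry, a lifetime above the policy maximum, or reach across more than one organisation: the long lifetime and two-organisation reach that made the Argon-DevOps-Mgt token so dangerous.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class TokenRecord:
    # Hypothetical inventory fields; map them from whatever export you have.
    name: str
    created_at: datetime
    expires_at: Optional[datetime]  # None means the token never expires
    organisations: list[str] = field(default_factory=list)


def audit_tokens(tokens: list[TokenRecord],
                 max_lifetime: timedelta = timedelta(days=7)) -> list[str]:
    """Return findings for tokens that break a short-lifetime, single-org policy."""
    findings = []
    for token in tokens:
        if token.expires_at is None:
            findings.append(f"{token.name}: no expiry set")
        elif token.expires_at - token.created_at > max_lifetime:
            findings.append(f"{token.name}: lifetime exceeds {max_lifetime.days} days")
        if len(token.organisations) > 1:
            orgs = ", ".join(token.organisations)
            findings.append(f"{token.name}: spans multiple organisations ({orgs})")
    return findings


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    inventory = [
        TokenRecord("ci-deploy", now, now + timedelta(days=1), ["example-org"]),
        TokenRecord("legacy-service-account", now, None, ["org-a", "org-b"]),
    ]
    for finding in audit_tokens(inventory):
        print(finding)
```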

```yaml
# PyPI Trusted Publisher: no long-lived token required.
# The job must also grant the OIDC permission:
#   permissions:
#     id-token: write
- name: Publish to PyPI
  uses: pypa/gh-action-pypi-publish@<SHA>
  with:
    attestations: true
```

The Telnyx compromise happened because its PyPI publishing used a long-lived API token rather than OIDC Trusted Publishers. Anyone with that token could publish from anywhere. OIDC-issued credentials are short-lived and scoped to a specific repository and workflow run, so a token captured during a run is useful for minutes rather than indefinitely.
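What makes a trusted-publisher credential hard to abuse is that the index checks who is asking, not just what they hold. The sketch below is a simplified model of that check, not PyPI's implementation. The claim names it reads (aud, exp, repository, job_workflow_ref, environment) follow GitHub's Actions OIDC token format; the TrustedPublisher structure, the matching rules and the "pypi" audience default are assumptions for illustration, and the signature and issuer verification that a real verifier performs first is omitted.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TrustedPublisher:
    # Hypothetical configuration, loosely modelled on what an index asks for.
    repository: str                    # "owner/name"
    workflow_filename: str             # e.g. "publish.yml"
    environment: Optional[str] = None  # optional GitHub deployment environment


def claims_match(claims: dict, publisher: TrustedPublisher,
                 audience: str = "pypi", now: Optional[float] = None) -> bool:
    """Decide whether already-verified OIDC claims satisfy a publisher config.

    Assumes the token's signature and issuer were verified beforehand;
    the default audience value is an assumption for illustration.
    """
    now = time.time() if now is None else now
    if claims.get("aud") != audience:
        return False
    if claims.get("exp", 0) <= now:
        return False  # expired: a captured token stops working on its own
    if claims.get("repository") != publisher.repository:
        return False  # minted for a different repository (e.g. a fork)
    # job_workflow_ref looks like "owner/name/.github/workflows/publish.yml@refs/heads/main"
    workflow_path = claims.get("job_workflow_ref", "").split("@", 1)[0]
    expected = f"{publisher.repository}/.github/workflows/{publisher.workflow_filename}"
    if workflow_path != expected:
        return False  # same repository, but a different workflow file
    if publisher.environment is not None and claims.get("environment") != publisher.environment:
        return False
    return True
```

The contrast with a long-lived API token is the point: that token carries no claims to check, so whoever holds it is accepted.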

3. Scan for Hardcoded Secrets and Prevent .env Files From Being Committed

TeamPCP's own explanation for their success was blunt: "A lot of companies hard code shit or don't gitignore .env files." This is the single most direct statement in the entire campaign about what enabled the credential cascade. .env files, shell histories, and inline credentials were systematically targeted across every wave — and the attack succeeded in large part because those credentials were there to steal.

The fix is a combination of prevention (stop secrets entering the repo) and detection (scan for secrets that have already entered).

Prevention — stop secrets reaching the repository:

- Add .env and secret file patterns to your global .gitignore, and enforce a repository-level .gitignore template across your organisation.

- Use git-secrets (AWS Labs) or gitleaks as a pre-commit hook. These run locally before each commit, blocking commits that contain patterns matching API keys, tokens, and credentials:

```shell
# Scan staged changes for secrets (invoke this from your pre-commit hook)
gitleaks protect --staged -v
```

- Use pre-commit to enforce hooks across your team so individuals cannot bypass them without explicitly opting out.
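Tools like gitleaks work by matching staged content against rules for known credential formats. The sketch below shows that idea in miniature as a Python pre-commit check: it reads the staged file list and added lines with git diff --cached and flags a few well-known patterns (AWS access key IDs, classic GitHub personal access tokens, private key headers) plus any staged .env file. The rule set is deliberately tiny and illustrative; use gitleaks or git-secrets for real coverage.

```python
import re
import subprocess
import sys

# A tiny, illustrative rule set; real scanners ship hundreds of tuned rules.
RULES = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "GitHub classic PAT": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "Private key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan_text(text: str) -> list[str]:
    """Return the names of rules that match anywhere in text."""
    return [name for name, pattern in RULES.items() if pattern.search(text)]


def is_env_file(path: str) -> bool:
    """True for .env and variants such as .env.production, but not .env.example."""
    name = path.rsplit("/", 1)[-1]
    return (name == ".env" or name.startswith(".env.")) and not name.endswith(".example")


def git_output(*args: str) -> str:
    return subprocess.run(["git", *args], capture_output=True, text=True, check=True).stdout


def staged_findings() -> list[str]:
    """Check staged (added, copied, modified) file names and added lines."""
    names = git_output("diff", "--cached", "--name-only", "--diff-filter=ACM").splitlines()
    diff = git_output("diff", "--cached", "-U0")
    # Keep only added lines, dropping the "+++ b/<file>" headers.
    added = "\n".join(line[1:] for line in diff.splitlines()
                      if line.startswith("+") and not line.startswith("+++"))
    findings = [f"staged env file: {name}" for name in names if is_env_file(name)]
    findings += [f"possible secret: {rule}" for rule in scan_text(added)]
    return findings


def main() -> int:
    findings = staged_findings()
    for finding in findings:
        print(finding, file=sys.stderr)
    return 1 if findings else 0
```

To use it as a hook, save it as .git/hooks/pre-commit with a #!/usr/bin/env python3 first line, make it executable, and end the file with sys.exit(main()); a non-zero exit status aborts the commit.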

Detection — scan for secrets already in your repositories:

For server-side push protection, the options vary significantly by cost:

- TruffleHog — free and open source. Scans git history for secrets, including secrets that were committed and then deleted. Deletion does not remove a secret from history; it remains accessible in prior commits. Run it as a CI step or as a pre-receive hook:

```shell
trufflehog git https://github.com/YOUR_ORG/YOUR_REPO
```

TruffleHog also has a GitHub Action that runs on every push and PR, giving you server-side coverage without any licensing cost.

- Gitleaks — free and open source. Can run as a full history scan in CI, not just on staged changes. A Gitleaks GitHub Action is available for zero-cost push scanning on any repository:

```shell
gitleaks detect --source . -v
```

- Semgrep — the free tier includes secret scanning rules and runs in CI via GitHub Actions. Covers a wide range of secret patterns and is free for open source and small teams.

- GitGuardian — free for open source repositories and individual developers; paid for private org-wide scanning. Provides real-time monitoring and integrates as a GitHub App. A practical middle ground between free tooling and GHAS pricing.

- GitHub Advanced Security secret scanning with push protection — the most comprehensive server-side option for GitHub, blocking commits at the platform level. Free for all public repositories. For private repositories it requires GitHub Advanced Security, which is priced per active committer and is the most expensive option here. Worth it if you are already on GitHub Enterprise, but for most smaller organisations the combination of Gitleaks or TruffleHog Actions plus GitGuardian covers the same ground at a fraction of the cost.

Recommended free stack for most organisations: gitleaks pre-commit hook (local prevention) + TruffleHog or Gitleaks GitHub Action (CI enforcement) + a one-time full history scan with TruffleHog. This covers most of the attack surface that GHAS addresses for secret scanning at zero licensing cost. The main gap is platform-level push protection: local hooks can be skipped, and CI scans flag a secret only after it has been pushed.

If a .env file has been committed at any point in history, treat it as compromised. Git history is public if the repository is public, and even private repositories may have been exfiltrated. Rotate every credential in that file immediately — do not rely on the fact that the commit was later removed.

The broader principle: a secrets manager is the correct long-term answer. AWS Secrets Manager, HashiCorp Vault, and Azure Key Vault remove credentials from the filesystem entirely. There is no .env file to accidentally commit if the application retrieves credentials at runtime from a managed store. This is operationally heavier to set up but eliminates an entire class of exposure.
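Retrieving a credential at runtime looks roughly like the sketch below, which calls AWS Secrets Manager's get_secret_value through a boto3 client. The secret name and the JSON layout of the secret (username and password keys) are hypothetical. The client is passed in as a parameter so the function can be exercised with a stub; this is one reasonable structure, not the only one.

```python
import json
from typing import Any


def load_database_credentials(client: Any, secret_id: str) -> dict[str, str]:
    """Fetch a JSON-formatted secret from AWS Secrets Manager at runtime.

    client is expected to be a boto3 Secrets Manager client. Nothing is read
    from disk, so there is no .env file to commit by accident.
    """
    response = client.get_secret_value(SecretId=secret_id)
    # Text secrets arrive in SecretString (binary ones would use SecretBinary).
    secret = json.loads(response["SecretString"])
    # The "username"/"password" keys are a hypothetical secret layout.
    return {"username": secret["username"], "password": secret["password"]}
```

In production the call would look like load_database_credentials(boto3.client("secretsmanager"), "prod/app/db"), with "prod/app/db" as a placeholder name and the client authenticating through an IAM role rather than static keys, so no credential ever needs to sit in the repository.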

4. Harden Your GitHub Actions Runtime

SHA pinning prevents the malicious code from loading. But defence in depth means assuming it might load anyway and catching it at the next layer.

StepSecurity's Harden Runner provides runtime egress blocking for GitHub Actions:

```yaml
# Should be the first step in each job
- uses: step-security/harden-runner@<SHA>
  with:
    egress-policy: block
    allowed-endpoints: >
      ghcr.io:443
      github.com:443
      nvd.nist.gov:443
      osv.dev:443
```

This would have blocked exfiltration to scan.aquasecurtiy.org (a typosquatted lookalike domain) at the network level, regardless of what the malicious code attempted. The credentials never leave the runner. This is available free for open source projects.
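The effect of egress-policy: block can be reasoned about as an allowlist lookup on host:port. The sketch below models that decision for illustration only: Harden Runner enforces the policy at the network layer on the runner, and its real matching rules (for example, any wildcard support) may differ from this exact-match model.

```python
# The allowlist from the workflow snippet above, as host:port entries.
ALLOWED_ENDPOINTS = {
    "ghcr.io:443",
    "github.com:443",
    "nvd.nist.gov:443",
    "osv.dev:443",
}


def is_allowed(host: str, port: int, allowlist: set[str] = ALLOWED_ENDPOINTS) -> bool:
    """Exact-match model of an egress allowlist of host:port entries."""
    normalised = host.lower().rstrip(".")  # DNS names are case-insensitive
    return f"{normalised}:{port}" in allowlist


if __name__ == "__main__":
    for host in ("github.com", "scan.aquasecurtiy.org"):
        verdict = "allowed" if is_allowed(host, 443) else "blocked"
        print(f"{host}:443 -> {verdict}")
```

Note that exact matching also blocks api.github.com, which many actions call. Running Harden Runner in egress-policy: audit mode first, and building the allowlist from what legitimate runs actually contact, avoids breaking pipelines when you switch to block.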

Additional hardening:

```yaml
permissions:
  contents: read # not write: pipelines rarely need write access
```

Least privilege on GITHUB_TOKEN prevents the fallback exfiltration channel where the malware creates a public tpcp-docs repository in your organisation using the runner's own token.
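To apply least privilege across many workflows, parse each file and inspect its permissions blocks. The sketch below works on an already-parsed workflow mapping (for example, the result of PyYAML's yaml.safe_load) and flags a missing top-level permissions key, write-all, and any scope granted write. Treating every write grant as a finding is a deliberately strict assumption: some jobs, such as releases or OIDC publishing with id-token: write, legitimately need one, so expect to review rather than blindly fail on each hit.

```python
from typing import Any


def audit_permissions(workflow: dict[str, Any]) -> list[str]:
    """Flag missing or broad GITHUB_TOKEN permissions in a parsed workflow."""
    findings = []
    if "permissions" not in workflow:
        findings.append("workflow: no top-level permissions (token uses the repository default)")
    # Check the workflow-level block first, then each job's own block.
    scopes = [("workflow", workflow.get("permissions"))]
    scopes += [(f"job {name}", (job or {}).get("permissions"))
               for name, job in (workflow.get("jobs") or {}).items()]
    for where, perms in scopes:
        if perms == "write-all":
            findings.append(f"{where}: write-all")
        elif isinstance(perms, dict):
            for scope, level in perms.items():
                if level == "write":
                    findings.append(f"{where}: {scope}: write")
    return findings
```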

5. Treat Contractor Offboarding as a Critical Security Event

The largest alleged exfiltration from this campaign — multiple terabytes of government, military, and public services data — was obtained not by compromising those organisations directly, but through contractors operating in their supply chains. This is a structural risk that persists long after any single campaign is over.

Contractors often have the same level of access as employees — same GitHub org membership, same CI/CD pipeline permissions, same cloud credential access — but operate under fundamentally different offboarding processes. When a contractor engagement ends, their access is frequently left active while administrative procedures catch up. In the meantime, any credentials they touched remain valid.

Immediate actions:

- Treat the end of a contractor engagement as an access revocation event, not an administrative task. Revocation should happen on the last day of work, not days later

- Maintain a live inventory of which contractors have access to which systems. This is difficult in practice, but essential — you cannot offboard access you do not know exists

- Never provision contractors with permanent credentials. Where possible, use short-lived tokens (OIDC, session-scoped credentials) so that credential revocation is time-bounded regardless of whether offboarding is completed promptly

- Rotate any credentials that a departing contractor had access to, not just revoke their account. Their account revocation does not invalidate secrets they may have copied or cached locally

- Apply the same rule to third-party tools and integrations that contractors configure — API keys, webhooks, and OAuth grants created by contractors survive account deletion unless explicitly removed
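The inventory and revocation rules above become checkable once contractor end dates and live credentials sit in the same place. The sketch below joins a hypothetical contractor register against a hypothetical credential inventory and reports any credential still active after its owner's or creator's engagement ended, which covers keys and integrations a contractor created under another account. Both record formats are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Contractor:
    user: str
    engagement_end: date  # last working day


@dataclass
class Credential:
    # Hypothetical inventory entry: PAT, cloud key, webhook secret or OAuth grant.
    identifier: str
    owner: str                       # account that holds the credential
    created_by: str                  # account that created it (may differ)
    revoked_on: Optional[date] = None


def stale_contractor_access(contractors: list[Contractor],
                            credentials: list[Credential],
                            today: date) -> list[str]:
    """List active credentials linked to contractors whose engagement has ended."""
    # Revocation is due on the last day of work, so that day already counts.
    ended = {c.user: c.engagement_end for c in contractors if c.engagement_end <= today}
    findings = []
    for cred in credentials:
        if cred.revoked_on is not None:
            continue
        for user in (cred.owner, cred.created_by):
            if user in ended:
                findings.append(f"{cred.identifier}: still active, linked to {user} "
                                f"(engagement ended {ended[user].isoformat()})")
                break  # one finding per credential is enough
    return findings
```

Matching on created_by as well as owner reflects the point above: account deletion does not remove the API keys, webhooks and OAuth grants a contractor set up.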

The pattern is not unique to this campaign. LAPSUS$, which T00001B appears to have some community awareness of, repeatedly exploited contractor access as a lateral movement path into high-profile targets. It is a known playbook, and it keeps working because contractor lifecycle management is consistently treated as an HR process rather than a security control.

6. Use a Package Firewall for npm and PyPI Installs

SHA pinning and minimum release age are install-time controls. A package firewall is a network-layer control — it intercepts every npm install and pip install before the package reaches your environment and can block, quarantine, or alert on suspicious packages before code ever executes.

This matters because install-time and import-time hooks run automatically: npm postinstall scripts execute during install, the LiteLLM payload used .pth file injection to run on every Python process start, and the Telnyx payload ran on import. By the time your scanner sees the package, the malicious code may have already run. A firewall operating before install eliminates that window.
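npm runs a package's preinstall, install and postinstall scripts automatically during installation, which is exactly the install-time window described above. The sketch below lists installed packages that declare any of those hooks, a cheap way to see what --ignore-scripts would actually suppress in your tree. It reads only package.json files at the top level and one scope level of node_modules (nested node_modules are not walked), and it does not detect the node-gyp rebuild npm runs implicitly for packages that ship a binding.gyp file.

```python
import json
from pathlib import Path

# Lifecycle scripts npm runs automatically during "npm install".
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(manifest: dict) -> dict[str, str]:
    """Return the install-time scripts declared in a parsed package.json."""
    scripts = manifest.get("scripts") or {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


def packages_with_hooks(node_modules: Path) -> dict[str, dict[str, str]]:
    """Map installed top-level and scoped packages to their install hooks."""
    if not node_modules.is_dir():
        return {}
    manifests = [*node_modules.glob("*/package.json"), *node_modules.glob("@*/*/package.json")]
    results = {}
    for path in manifests:
        try:
            manifest = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable or malformed manifest; skip rather than crash
        hooks = install_hooks(manifest)
        if hooks:
            results[manifest.get("name", str(path.parent))] = hooks
    return results
```

Running packages_with_hooks(Path("node_modules")) after an npm install --ignore-scripts gives the short list of packages whose scripts you would need to allow explicitly, which is usually far smaller than the full dependency tree.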

For npm:

- Socket.dev — analyses packages for malicious behaviour, typosquatting, and supply chain risk before install. Integrates with CI and as a proxy. Blocked the npm wave packages within hours of publication.

- Private registry proxy (Artifactory, Nexus, Verdaccio) with an allow-list — only packages explicitly approved can be installed. High operational overhead, but eliminates entire categories of typosquat and dependency confusion attacks.

- --ignore-scripts flag — prevents lifecycle scripts such as postinstall hooks from running during npm install. Not a complete solution (some legitimate packages require scripts), but significantly raises the bar for this class of attack:

npm install --ignore-scripts

Harden your .npmrc configuration:

Several npm config options (see npm's config documentation for the full reference) can be set persistently in your project or global .npmrc to reduce supply chain risk:

# .npmrc — supply chain hardening

# Pin exact versions in package.json rather than semver ranges (^ or ~)
# Prevents silent version drift on reinstall
save-exact=true

# Submit an audit report alongside install; audit-level sets the minimum
# severity at which `npm audit` exits non-zero (install itself is not blocked,
# so run `npm audit` as a separate CI step to fail the build)
audit=true
audit-level=high

# Block all git-sourced dependencies (e.g. foo: "github:user/repo")
# Eliminates an entire class of dependency confusion and source-swapping attacks
allow-git=none

# Enforce SSL certificate validation for registry connections
# Should always be true; never disable this in production pipelines
strict-ssl=true

# Minimum release age in days — blocks packages published fewer than N days ago
# Directly mitigates the LiteLLM/Telnyx class of attack (malicious version live for hours)
min-release-age=7

# For package publishers: link published packages to the CI/CD workflow that built them
# Creates a verifiable provenance attestation on the npm registry
provenance=true

save-exact=true is worth calling out specifically: npm's default behaviour saves dependencies with a ^ prefix (e.g. ^1.2.3). Any install that resolves without a lockfile, or regenerates it, can therefore silently pull the latest compatible minor version rather than the version you tested with. Setting save-exact=true makes every npm install <package> write a pinned version, so package.json and package-lock.json agree. Combined with min-release-age, this significantly narrows the window in which a malicious version could be automatically pulled.

allow-git=none is underused: git-sourced dependencies ("foo": "github:org/repo") are not subject to the same registry controls, signing, or provenance checks as versioned packages. Blocking them by default and allowing exceptions explicitly is a low-cost policy with meaningful supply chain value.
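Both settings only affect future writes, so existing manifests may still carry ranges and git specs. Below is a rough sketch for auditing a package.json. It assumes the common spec forms: exact semver, ^/~ ranges, git URLs, and user/repo shorthand. The regular expressions are illustrative approximations of npm's spec grammar, not npm's own parser, and the function names are hypothetical.

```python
import json
import re
from pathlib import Path

# Approximate forms of git-sourced specs: git URLs, scp-style git@, hosted
# shortcuts, and bare "user/repo" GitHub shorthand (optionally with #ref)
GIT_SPEC = re.compile(
    r"^(git(\+[a-z]+)?://|git@|github:|gitlab:|bitbucket:|[A-Za-z0-9][\w.-]*/[\w.-]+(#.*)?$)"
)
# An exact semver version such as 1.2.3 or 1.2.3-beta.1
EXACT_SPEC = re.compile(r"^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?(\+[0-9A-Za-z.-]+)?$")

SECTIONS = ("dependencies", "devDependencies", "optionalDependencies")


def classify(spec: str) -> str:
    """Label a dependency spec as 'git', 'exact', or 'range' (anything else)."""
    if GIT_SPEC.match(spec):
        return "git"
    if EXACT_SPEC.match(spec):
        return "exact"
    return "range"


def audit_manifest(manifest: dict) -> dict:
    """Return {name: (spec, kind)} for every dependency that is not pinned exactly."""
    problems = {}
    for section in SECTIONS:
        for name, spec in (manifest.get(section) or {}).items():
            kind = classify(spec)
            if kind != "exact":
                problems[name] = (spec, kind)
    return problems


if __name__ == "__main__":
    manifest = json.loads(Path("package.json").read_text(encoding="utf-8"))
    for name, (spec, kind) in audit_manifest(manifest).items():
        print(f"{kind:5}  {name}: {spec}")
```

Git-sourced entries are candidates for removal or explicit exception. Range entries can be re-saved as exact versions once save-exact=true is in place.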

For Python/PyPI:

- pip-audit — scans installed packages and requirements.txt against OSV and PyPA advisory databases. Free and fast. Because it relies on published advisories, it only catches a malicious release once an advisory exists, so it complements rather than replaces a proxy. It is composable into any pipeline:

pip-audit -r requirements.txt

- Artifactory or Nexus as a PyPI proxy — routes all pip installs through a controlled mirror. Packages can be blocked, scanned, or quarantined before they reach developers or CI runners.

- pypi-parser / private index with --index-url — for high-security environments, point pip at an internal mirror that only includes vetted packages and blocks direct PyPI access entirely:

pip install --index-url https://your-internal-pypi.example.com/simple/ -r requirements.txt

The LiteLLM and Telnyx attacks succeeded partly because developers and pipelines were pulling directly from public PyPI with no intermediary layer. A proxy or firewall would have provided an additional detection or blocking opportunity even if the malicious versions were not caught before publication.

A note on cost. Commercial npm firewall solutions — particularly Socket.dev's team and enterprise tiers, and self-hosted proxies like Artifactory or Nexus — carry meaningful licensing and infrastructure costs that are not realistic for every organisation. If a full firewall is out of reach, a minimum release age policy (min-release-age in .npmrc, minimumReleaseAge in Socket.dev) is the most practical fallback. It is free, requires a single line of configuration, and directly targets the class of attack used in this campaign. Malicious versions of LiteLLM and Telnyx were active for hours before detection. A 7-day release age window would therefore have blocked automatic installation of both, provided the policy is enforced wherever Python packages are resolved; an .npmrc setting governs npm only. It is not a substitute for a firewall, but it is a significant risk reduction at zero cost.
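Because an .npmrc setting does not apply to pip, one option is a pre-install gate that checks how long ago a PyPI release was uploaded. The sketch below illustrates this under several assumptions:

- it reads the release's file list from PyPI's JSON API (https://pypi.org/pypi/<project>/<version>/json);
- it keys on the newest file's upload_time_iso_8601 field;
- it fails closed when no files are listed.

The helper names and the 7-day MIN_AGE are hypothetical choices.

```python
import json
import sys
import urllib.request
from datetime import datetime, timedelta, timezone

MIN_AGE = timedelta(days=7)  # hypothetical policy value, mirroring the 7-day window above


def newest_upload(release_files: list) -> datetime:
    """Latest upload time among a release's files (entries of the JSON API 'urls' list)."""
    return max(
        datetime.fromisoformat(f["upload_time_iso_8601"].replace("Z", "+00:00"))
        for f in release_files
    )


def allowed(release_files: list, now: datetime, min_age: timedelta = MIN_AGE) -> bool:
    """True only if every file in the release is at least min_age old."""
    if not release_files:
        return False  # nothing uploaded (or all removed): fail closed
    return now - newest_upload(release_files) >= min_age


def fetch_release_files(project: str, version: str) -> list:
    """Fetch the file list for one release from PyPI's JSON API."""
    url = f"https://pypi.org/pypi/{project}/{version}/json"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)["urls"]


if __name__ == "__main__":
    project, version = sys.argv[1], sys.argv[2]
    ok = allowed(fetch_release_files(project, version), datetime.now(timezone.utc))
    print(f"{project}=={version}: {'allow' if ok else 'block'}")
    sys.exit(0 if ok else 1)
```

The gate uses the newest file rather than the oldest because new distribution files can be added to an existing release. A release's first upload date alone can look older than the code you would actually install.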

7. Consider Running Two Vulnerability Scanners From Different Vendors

The Trivy incident exposed a single point of failure that many organisations did not know they had. Their security scanner was their vulnerability.

Running OSV-Scanner (Google) alongside Trivy (Aqua Security) provides meaningful supply chain diversification:

- Completely separate infrastructure and credential stores

- An attacker compromising Aqua Security has zero access to Google's publishing pipeline

- Both output SARIF format and feed into the same GitHub Security tab

- Practical overhead is minimal — perhaps 20–30 seconds added per pipeline run

- OSV-Scanner is free and open source: osv.dev

# Example: dual scanner workflow step
- name: Run OSV-Scanner
  uses: google/osv-scanner-action@<SHA-PIN>

- name: Run Trivy
  uses: aquasecurity/trivy-action@57a97c7e7821a5776cebc9bb87c984fa69cba8f1

This is not a silver bullet. If both scanners are referenced by mutable tags, you have doubled your attack surface rather than halving your risk. SHA pin both. But with SHA pinning in place, vendor diversification means a compromise of one vendor's infrastructure does not blind your pipeline entirely.

Alternatives to Trivy worth evaluating: Grype (Anchore) is the most direct like-for-like replacement and comes from a completely separate supply chain.

A note on vulnerability management overhead. Whether to run dual scanners is ultimately a case-by-case decision. For application owners, vulnerability management is already a cumbersome programme — managing findings from two scanners means deduplicating results, reconciling differing severity ratings, and maintaining two separate tool integrations, all of which pulls time away from mission-critical work. That overhead is real and should not be dismissed. The counterargument is equally concrete: two scanners from separate supply chains, each SHA-pinned to an immutable commit, mean a compromise of one vendor's infrastructure cannot blind your pipeline entirely — you still have a second, independent layer catching what the first cannot. Whether that tradeoff is worth it depends on your team's capacity and risk appetite. For organisations with lean security functions, starting with a single well-configured, SHA-pinned scanner and investing the saved overhead into robust detection and response is a reasonable alternative.
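Much of the deduplication overhead comes down to set arithmetic over the two reports. Below is a minimal sketch. It assumes both scanners emit SARIF 2.1.0 and that a finding can be keyed by its ruleId plus each location's artifact URI. In practice the two tools may report the same issue under different identifiers (a CVE in one, a GHSA or OSV ID in the other). A real pipeline would therefore also need an alias mapping, which this sketch does not attempt.

```python
import json
import sys
from pathlib import Path


def sarif_findings(sarif: dict) -> set:
    """Collect (ruleId, artifact URI) pairs from every run in a SARIF 2.1.0 log."""
    found = set()
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            rule = result.get("ruleId", "<no-rule>")
            for loc in result.get("locations") or [{}]:
                uri = (
                    loc.get("physicalLocation", {})
                    .get("artifactLocation", {})
                    .get("uri", "<no-location>")
                )
                found.add((rule, uri))
    return found


def compare(sarif_a: dict, sarif_b: dict) -> dict:
    """Split findings into those both scanners report and those unique to each."""
    a, b = sarif_findings(sarif_a), sarif_findings(sarif_b)
    return {"both": a & b, "only_a": a - b, "only_b": b - a}


if __name__ == "__main__":
    first, second = (json.loads(Path(p).read_text(encoding="utf-8")) for p in sys.argv[1:3])
    for bucket, items in compare(first, second).items():
        print(f"{bucket}: {len(items)}")
        for rule, uri in sorted(items):
            print(f"  {rule}  {uri}")
```

The only_a and only_b buckets show where each scanner fills the other's gaps, which is where triage effort actually goes.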

8. Treat Docker Wrappers and Local Tool Installs as a Separate Risk Category

Much of the discussion about this incident focused on GitHub Actions pipelines. But Trivy is also widely installed:

- Directly via apt/rpm/brew on developer machines

- As a Docker wrapper for local scanning

- As a persistent scanning service in on-premise environments

For these deployments, the threat model is fundamentally different. GitHub-hosted runners are ephemeral — they are destroyed after each job, so the sysmon.py persistence implant dies with them. Self-hosted runners and local installs are persistent machines. The RAT survives. It polls the C2 every 50 minutes. It downloads new payloads silently.

If you run Trivy locally, via Docker, or on a persistent self-hosted runner, and you pulled any version from v0.69.4 to v0.69.6 during the exposure window:

Check for persistence artifacts:

# Linux
ls -la ~/.config/sysmon.py
ls -la /tmp/pglog
ls -la ~/.config/audiomon/audiomon.py # LiteLLM/Telnyx wave
ls -la ~/.config/systemd/user/audiomon.service

# Windows
dir "%APPDATA%\Microsoft\Windows\Start Menu\Programs\Startup\msbuild.exe"

Check for attacker pods in Kubernetes:

kubectl get pods -n kube-system | grep node-setup

Do not attempt to clean in place. Rebuild from a known-good state and rotate all credentials.
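On hosts with Python available, the same file checks can be scripted to run across a fleet of runners or workstations. This minimal sketch covers only the paths listed above. The lists are meant to be extended from the vendor IOC references, and a hit means rebuild, not clean.

```python
import os
from pathlib import Path

# Paths listed in this section; extend from the vendor IOC references as needed
LINUX_ARTEFACTS = [
    "~/.config/sysmon.py",
    "/tmp/pglog",
    "~/.config/audiomon/audiomon.py",
    "~/.config/systemd/user/audiomon.service",
]
WINDOWS_ARTEFACTS = [
    r"%APPDATA%\Microsoft\Windows\Start Menu\Programs\Startup\msbuild.exe",
]


def present_artefacts(candidates) -> list:
    """Expand ~ and environment variables, then return the paths that exist."""
    hits = []
    for raw in candidates:
        path = Path(os.path.expandvars(os.path.expanduser(raw)))
        if path.exists():
            hits.append(str(path))
    return hits


if __name__ == "__main__":
    # %VAR% expansion only happens on Windows, so pick the list by platform
    candidates = WINDOWS_ARTEFACTS if os.name == "nt" else LINUX_ARTEFACTS
    hits = present_artefacts(candidates)
    for hit in hits:
        print("FOUND:", hit)
    raise SystemExit(1 if hits else 0)
```

The non-zero exit code on a hit lets the script act as a gate in configuration management or a runner health check.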

9. For Vibe Coders and AI-Assisted Development: Isolate Your Environment

The rise of AI coding tools — Cursor, GitHub Copilot, and others — has accelerated package installation rates dramatically. Developers are installing packages they do not fully understand, often at AI suggestion, without reviewing what is being added. This is a perfect storm for supply chain attacks.

If you use AI-assisted development tools:

- Use Docker containers as your development environment. Keep your local machine credential store separate from your coding environment. If a malicious package executes in a container, your AWS keys, SSH keys, and cloud credentials on the host are not accessible.

- Never store credentials inside your development container. Mount them read-only from outside, or use a secrets manager.

- Avoid latest tags in your pipelines. Using tag: latest — whether for Docker images, npm packages, or other dependencies — means you are silently pulling whatever the publisher most recently pushed. This is the same mutable-reference problem that made the Trivy and LiteLLM attacks so effective. If latest is unavoidable in your workflow, pair it with a minimum release age policy so you are never the first to run freshly published code. Socket.dev's minimumReleaseAge configuration does exactly this — blocking packages published within the last N days from installing automatically. npm has now introduced this as a first-class feature; see Socket.dev's write-up for configuration details.

# Socket.dev-style setting; confirm the exact key and units for your tool
minimumReleaseAge: 7
# .npmrc equivalent (days), as in the hardening config above
min-release-age=7

Note: this is a risk reduction measure, not a complete solution — long-term access tokens mean attackers can sit on stolen credentials for weeks before acting, as this campaign demonstrated.

- Audit what AI tools are adding to your dependency manifests (package.json, requirements.txt, pyproject.toml). The LiteLLM and Telnyx payloads injected themselves so seamlessly that the real SDK continued to function normally. Everything looked fine. You would not know unless you checked.

- Keep credentials out of your shell environment. AWS_ACCESS_KEY_ID exported in .zshrc is available to every process you run — including postinstall hooks. Use direnv to scope credentials to specific project directories, or inject them via a CLI secrets manager only when needed. The .pth mechanism in LiteLLM and Telnyx targeted exactly what was sitting in the environment at Python startup.

- Watch for unexpected outbound connections from your dev machine. The LiteLLM and Telnyx payloads phoned home on a ~50-minute polling loop. On macOS, LuLu (free) or Little Snitch will surface a Python or Node process making connections it has no business making. On Linux, OpenSnitch does the same. Neither is foolproof, but an alert beats silence.
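To see what a postinstall hook or .pth payload would inherit from your shell, a sketch like this lists environment variable names that look like credentials. Run it from the same shell you develop in. The name patterns are an illustrative assumption, not an exhaustive rule set, and the script prints names only, never values.

```python
import os
import re

# Illustrative name patterns for secrets; not an exhaustive rule set
SECRET_NAME = re.compile(
    r"^AWS_(ACCESS_KEY_ID|SECRET_ACCESS_KEY|SESSION_TOKEN)$"
    r"|TOKEN|SECRET|PASSWORD|API_KEY|PRIVATE_KEY",
    re.IGNORECASE,
)


def secret_like_vars(env) -> list:
    """Sorted names of variables whose names look like credentials."""
    return sorted(name for name in env if SECRET_NAME.search(name))


if __name__ == "__main__":
    # Print names only, never values, so the output is safe to paste into a ticket
    for name in secret_like_vars(os.environ):
        print(name)
```

Anything it prints is visible to every child process of that shell. Those names are candidates for moving behind direnv or a secrets manager.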

10. Adopt a Healthy Scepticism of GitHub Repositories

The trust model of the open source ecosystem is broken in a specific way: we treat popular as synonymous with safe. Trivy had millions of users. axios had 100 million weekly downloads. Neither of those numbers tells you anything about the security of the maintainer's credentials.

Practical checks before adding any GitHub Action or open source dependency:

- Who maintains it? A single maintainer with no MFA and a long-lived PAT is a single point of failure regardless of popularity

- Is it SHA pinned in your workflow? If not, you are implicitly trusting whoever controls the repo forever

- Does it have immutable releases? Check whether releases are signed and whether tags are protected

- Check the licence and org. A recently created GitHub org with a handful of repos and a popular-sounding name is a red flag

- Use OSV.dev's API to check a package before installing (in the Go ecosystem, OSV identifies packages by module path):

curl -d '{"version": "0.69.4", "package": {"name": "github.com/aquasecurity/trivy", "ecosystem": "Go"}}' \
  https://api.osv.dev/v1/query

OSV.dev aggregates vulnerability data from GitHub Security Advisories, PyPA, RustSec, and others into a single queryable database. It is free and provides a machine-readable API suitable for integrating into your pipeline pre-checks.

- Check for provenance attestations. npm now supports build provenance — packages published via GitHub Actions can carry a verifiable attestation linking the package to a specific repo commit and workflow run. Run npm audit signatures to check. PyPI supports sigstore attestations under PEP 740 for packages that have opted in. Neither LiteLLM nor Telnyx had attestations at the time of their compromise. This isn't a silver bullet — an attacker with maintainer credentials can generate a valid attestation — but a mature package that has never had one, or one that suddenly acquires its first attestation, is worth a second look.
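The same OSV query can be wired into a pipeline pre-check. Below is a minimal standard-library sketch. It assumes the /v1/query request shape shown in the curl example and a response whose vulns array carries an id for each advisory. It ignores OSV's next_page_token pagination, and the helper names are hypothetical.

```python
import json
import urllib.request

OSV_QUERY_URL = "https://api.osv.dev/v1/query"


def osv_payload(name: str, version: str, ecosystem: str) -> bytes:
    """Build the JSON body for OSV's /v1/query endpoint."""
    body = {"version": version, "package": {"name": name, "ecosystem": ecosystem}}
    return json.dumps(body).encode("utf-8")


def vuln_ids(response: dict) -> list:
    """Extract advisory IDs; a response with no 'vulns' key means no matches."""
    return [v["id"] for v in response.get("vulns", [])]


def query_osv(name: str, version: str, ecosystem: str) -> list:
    """POST one package/version query to OSV and return matching advisory IDs."""
    req = urllib.request.Request(
        OSV_QUERY_URL,
        data=osv_payload(name, version, ecosystem),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return vuln_ids(json.load(resp))


if __name__ == "__main__":
    print(query_osv("litellm", "1.82.7", "PyPI"))
```

The result reflects whatever OSV holds at query time. An empty list means no published advisory, not that the package is safe, which is why this belongs alongside a release-age policy rather than in place of one.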

11. Audit Your Commit History for Tampering and Backdated Injections

Not all supply chain attacks announce themselves with a fresh commit. Two distinct techniques — one used by TeamPCP, one documented in the XCTDH campaign — show how attackers are actively subverting the assumption that commit history is a reliable audit trail.

Technique 1 — Ghost commits (TeamPCP)

The Argon-DevOps-Mgt compromise was preceded by a reconnaissance commit that was created and deleted within the same second — a ghost branch test to probe write access before the full attack. This is a broader pattern: malicious commits are made, executed in pipelines, and immediately deleted or reverted. The pipeline runs. The secrets are exfiltrated. The evidence is gone. By the time anyone looks at the repository, the commit history appears clean.

Reconstructed as raw git commands, the per-tag rewrite looks like this:

# For each tag (e.g. 0.33.0):
# Step 1 & 3: Get original tag's metadata
ORIG_AUTHOR=$(git log -1 refs/tags/0.33.0 --format="%an")
ORIG_EMAIL=$(git log -1 refs/tags/0.33.0 --format="%ae")
ORIG_DATE=$(git log -1 refs/tags/0.33.0 --format="%aI")
ORIG_MSG=$(git log -1 refs/tags/0.33.0 --format="%B")

# Step 2: Start from master HEAD, swap only entrypoint.sh
git checkout master
cp malicious_entrypoint.sh entrypoint.sh
git add entrypoint.sh

# Step 4 & 5: Commit with spoofed metadata, parent = master HEAD
git config user.name "$ORIG_AUTHOR"
git config user.email "$ORIG_EMAIL"
GIT_AUTHOR_DATE="$ORIG_DATE" \
GIT_COMMITTER_DATE="$ORIG_DATE" \
git commit --no-verify -m "$ORIG_MSG"

# Step 6: Force-push tag to malicious commit
git tag -f 0.33.0
git push --force origin refs/tags/0.33.0

This is why pipeline logs matter as much as repository history. A clean repository does not mean nothing happened — it may mean something happened and was cleaned up. Commit history is not a substitute for runtime logs and egress monitoring.
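The final force-push is what makes tag references dangerous: the tag name stays the same while its target commit changes. A defender can snapshot the tag-to-commit mapping of every third-party repository they reference by tag and diff it on a schedule. Below is a minimal sketch. It assumes `git ls-remote --tags` output, where annotated tags also appear as a peeled `^{}` line pointing at the commit, and a baseline you store yourself. The function names are hypothetical.

```python
import subprocess


def parse_ls_remote(output: str) -> dict:
    """Map tag name -> commit SHA from `git ls-remote --tags` output.

    Annotated tags appear twice; the peeled "^{}" line names the commit and wins.
    """
    tags = {}
    for line in output.splitlines():
        if not line.strip():
            continue
        sha, ref = line.split("\t", 1)
        name = ref[len("refs/tags/"):]
        if name.endswith("^{}"):
            tags[name[:-3]] = sha
        else:
            tags.setdefault(name, sha)
    return tags


def moved_tags(baseline: dict, current: dict) -> dict:
    """Tags whose target changed or vanished since the baseline: {tag: (old, new_or_None)}."""
    return {
        tag: (old, current.get(tag))
        for tag, old in baseline.items()
        if current.get(tag) != old
    }


def remote_tags(remote: str) -> dict:
    """Read the current tag mapping of a remote (URL or configured remote name)."""
    out = subprocess.run(
        ["git", "ls-remote", "--tags", remote],
        check=True, capture_output=True, text=True,
    ).stdout
    return parse_ls_remote(out)
```

For example, save remote_tags("https://github.com/aquasecurity/trivy-action.git") as JSON once, then compare it against a fresh call later. Any entry returned by moved_tags now resolves to different code than when you recorded it, and a None target means the tag was deleted. SHA pinning removes the need for this check on the consumer side, but the diff can still surface a tag poisoning early.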

Technique 2 — Backdated commit injection (XCTDH)

A more sophisticated evolution of this technique involves manipulating Git's commit timestamp metadata to backdate a malicious commit to months or years in the past. Git's GIT_AUTHOR_DATE and GIT_COMMITTER_DATE environment variables can be set to any arbitrary value before a push, meaning a commit can be made to appear as if it was authored by a previous contributor 2–3 years ago. The malicious code sits in history, attributed to a legitimate former developer, with a timestamp that predates the attacker's access.

Raw batch file contents:

@echo off

for /f "delims=" %%A in ('cmd /c "git log -1 --date=format-local:%%Y-%%m-%%d --format=%%cd"') do set LAST_COMMIT_DATE=%%A
for /f "delims=" %%A in ('cmd /c "git log -1 --date=format-local:%%H:%%M:%%S --format=%%cd"') do set LAST_COMMIT_TIME=%%A
for /f "delims=" %%A in ('cmd /c "git log -1 --format=%%s"') do set LAST_COMMIT_TEXT=%%A
for /f "delims=" %%A in ('cmd /c "git log -1 --format=%%an"') do set USER_NAME=%%A
for /f "delims=" %%A in ('cmd /c "git log -1 --format=%%ae"') do set USER_EMAIL=%%A
for /f "delims=" %%A in ('git rev-parse --abbrev-ref HEAD') do set CURRENT_BRANCH=%%A

echo %LAST_COMMIT_DATE% %LAST_COMMIT_TIME%
echo %LAST_COMMIT_TEXT%
echo %USER_NAME% (%USER_EMAIL%)
echo Branch: %CURRENT_BRANCH%

set CURRENT_DATE=%date%
set CURRENT_TIME=%time%

date %LAST_COMMIT_DATE%
time %LAST_COMMIT_TIME%
echo Date temporarily changed to %LAST_COMMIT_DATE% %LAST_COMMIT_TIME%

git config --local user.name %USER_NAME%
git config --local user.email %USER_EMAIL%
git add .
git commit --amend -m "%LAST_COMMIT_TEXT%" --no-verify

date %CURRENT_DATE%
time %CURRENT_TIME%
echo Date restored to %CURRENT_DATE% %CURRENT_TIME% and complete amend last commit!

git push -uf origin %CURRENT_BRANCH% --no-verify

@echo on

This has two compounding effects: it defeats any alerting based on "recent commits", and it makes forensic attribution significantly harder — the suspicious change appears to have been made by someone who may no longer be on the team, long before the current incident.

Ransom-ISAC has documented the XCTDH campaign's evolution of this technique across a four-part series.

What to look for:

- Commits where author date and committer date diverge significantly — a large gap between the two can indicate post-hoc timestamp manipulation. Rebases, amends, and cherry-picks also produce gaps, so treat this as a triage signal rather than proof

- Commits attributed to former contributors (developers who left the project) that appear in the middle of recent change sets when viewing a file's history with git log --follow

- Changes to sensitive files (CI/CD configs, dependency manifests, workflow files) that do not appear in recent activity but whose content is current
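The first signal can be checked mechanically, along with a stronger one: a commit whose committer timestamp is earlier than its parent's, which normally requires forged dates or a rewound clock. Below is a minimal sketch over `git log` output, assuming a one-week MAX_GAP threshold (a hypothetical value to tune).

- It will not catch the amend-in-place variant shown above, which reuses the previous commit's own timestamp; that case needs server-side push records.
- Clock skew between contributors' machines can also produce false positives.

```python
import subprocess

# Tab-separated: commit, parents, author time, committer time (Unix), author name
LOG_FORMAT = "%H%x09%P%x09%at%x09%ct%x09%an"
MAX_GAP = 7 * 24 * 3600  # hypothetical threshold: one week, in seconds


def parse_log(output: str) -> dict:
    """Map commit SHA -> (parent SHAs, author_ts, committer_ts, author_name)."""
    commits = {}
    for line in output.splitlines():
        if not line:
            continue
        sha, parents, author_ts, committer_ts, name = line.split("\t", 4)
        commits[sha] = (parents.split(), int(author_ts), int(committer_ts), name)
    return commits


def anomalies(commits: dict, max_gap: int = MAX_GAP) -> list:
    """Flag large author/committer gaps and commits committed before their parent."""
    flagged = []
    for sha, (parents, author_ts, committer_ts, name) in commits.items():
        if abs(committer_ts - author_ts) > max_gap:
            flagged.append((sha, "author/committer gap", name))
        for parent in parents:
            # A child committed before its parent usually implies forged dates,
            # a rewound clock, or (less suspiciously) clock skew
            if parent in commits and committer_ts < commits[parent][2]:
                flagged.append((sha, "committed before parent", name))
                break
    return flagged


def read_history(repo: str = ".") -> dict:
    """Parse the full history of all refs in a local clone."""
    out = subprocess.run(
        ["git", "-C", repo, "log", "--all", f"--pretty=format:{LOG_FORMAT}"],
        check=True, capture_output=True, text=True,
    ).stdout
    return parse_log(out)


if __name__ == "__main__":
    for sha, reason, name in anomalies(read_history()):
        print(sha[:12], reason, name)
```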

Defensive measures:

- Enable branch protection rules with required pull request reviews — direct pushes to main without review create an unmonitored channel for these injections

- Use Sigstore/Gitsign or GPG commit signing and enforce signed commits at the branch protection level. A backdated commit can spoof a timestamp, but it cannot produce a valid signature from a key the attacker does not hold:

# Sign every local commit by default; enforcement itself is the
# "require signed commits" branch protection rule, configured on the server
git config --global commit.gpgsign true

- Consider Rekor (Sigstore's transparency log) for CI pipelines — it creates a tamper-evident, append-only log of signing events, making post-hoc injection of signed commits detectable

- Run periodic checks that surface commits where author and committer timestamps differ significantly. %at and %ct are Unix timestamps, so this example flags gaps of more than a day without any date parsing:

git log --pretty=format:"%H %at %ct %an %s" | awk '($3 - $2 > 86400) || ($2 - $3 > 86400)'

- Review your CI workflow: pipelines should run on pinned SHAs, not branch heads — this means a backdated commit merged to main still needs to be explicitly referenced before it executes in CI

The combination of ghost commits and backdated injections represents a maturation of supply chain tradecraft. The goal is the same in both cases: make the repository look clean while the malicious code executes. The defence is the same: do not trust repository state alone — corroborate it with signed commits, runtime logs, and egress monitoring.

Determining If You Were Exposed

Each wave of the TeamPCP campaign had a distinct exposure window and attack surface. For each section below, check whether you used the affected component in the stated window — if so, treat all credentials accessible to that environment as compromised and rotate immediately. For full technical detection detail and IOCs, follow the references provided; these are the authoritative sources.

Trivy GitHub Actions (Mar 19 17:43 UTC – Mar 20 05:40 UTC)

If you used aquasecurity/trivy-action by tag (not SHA) and any pipeline ran during this window, assume credential exposure. All secrets accessible to that workflow run — GitHub tokens, cloud credentials, publishing keys — should be treated as compromised.

Aqua Security official disclosure (GHSA-69fq-xp46-6x23) · CrowdStrike: From Scanner to Stealer · StepSecurity: 10 Layers Deep · Endor Labs analysis · ramimac.me/teampcp
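For teams with many repositories, checking run times by hand is slow. Below is a minimal sketch for triaging exported workflow-run timestamps against the window above. It assumes ISO-8601 start and end times and treats them as UTC when no offset is given. It tests for overlap rather than start time alone, because a run that began just before 17:43 UTC could still have resolved the tag inside the window. The function names are hypothetical.

```python
from datetime import datetime, timezone

# Trivy GitHub Actions exposure window stated above (Mar 19 17:43 to Mar 20 05:40 UTC, 2026)
TRIVY_ACTION_WINDOW = (
    datetime(2026, 3, 19, 17, 43, tzinfo=timezone.utc),
    datetime(2026, 3, 20, 5, 40, tzinfo=timezone.utc),
)


def parse_utc(timestamp: str) -> datetime:
    """Parse ISO-8601, treating a trailing Z or a missing offset as UTC."""
    parsed = datetime.fromisoformat(timestamp.replace("Z", "+00:00"))
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def overlaps(run_start: str, run_end: str, window=TRIVY_ACTION_WINDOW) -> bool:
    """True if the run's [start, end] interval intersects the exposure window."""
    start, end = parse_utc(run_start), parse_utc(run_end)
    window_start, window_end = window
    return start <= window_end and end >= window_start


if __name__ == "__main__":
    print(overlaps("2026-03-19T17:30:00Z", "2026-03-19T17:50:00Z"))
```

The window tuple is a parameter, so the same helper can be reused for any other time-bounded wave in this section.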

Trivy Docker Images (Mar 22 onwards)

Malicious images trivy:0.69.4, 0.69.5, and 0.69.6 were published to Docker Hub. Google's mirror.gcr.io continued serving malicious images after Docker Hub cleanup. If you pulled any of these versions — locally, in CI, or via a persistent scanning service — treat the host as potentially compromised. GitHub-hosted runners are ephemeral; self-hosted runners, local installs, and Docker-based scanning services are persistent and require closer inspection.

Aqua Security official disclosure (GHSA-69fq-xp46-6x23) · Socket.dev: Docker images compromised · ramimac.me/teampcp

Checkmarx KICS GitHub Action (Mar 23 onwards)

All 35 version tags of the Checkmarx KICS GitHub Action were poisoned using the same playbook as Trivy. If any pipeline ran the KICS action by tag after March 23, treat all secrets accessible to that workflow as compromised.

StepSecurity: KICS action compromised · Wiz: TeamPCP Attack on KICS · Checkmarx Security Update · ramimac.me/teampcp

npm Packages — @emilgroup, @opengov, @v7 namespaces (Mar 20)

47+ packages across these namespaces were infected in under 60 seconds on March 20. If any of your projects installed packages from these namespaces on or around that date, treat any secrets accessible during the install — shell environment variables, .env files in the working directory, cloud credential files — as compromised.

Socket.dev: npm wave analysis · ramimac.me/teampcp

LiteLLM PyPI (Mar 24 — v1.82.7, v1.82.8)

The malicious versions injected a .pth file, meaning the payload ran on every Python process start for as long as the poisoned version was installed — not just at install time. If you ran either version, treat the machine or container as compromised. Exposure is not limited to LiteLLM-specific code paths — any Python process on the affected host may have triggered the payload. Safe version: v1.82.9 or later.

Endor Labs analysis · Datadog: LiteLLM compromised on PyPI · ramimac.me/teampcp
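Because the payload lived in a .pth file, checking site-packages directly is a useful complement to checking version numbers. Python's site module executes any line in a .pth file that begins with `import` followed by a space or tab. The sketch below lists such lines for the current interpreter's site directories. Legitimate tooling (setuptools, editable installs, coverage tools) also uses this hook, so every hit needs review rather than automatic deletion. The function names are hypothetical.

```python
import site
import sysconfig
from pathlib import Path


def executable_pth_lines(text: str) -> list:
    """Lines the site module executes at startup: those starting with 'import' + space/tab."""
    return [line for line in text.splitlines() if line.startswith(("import ", "import\t"))]


def default_site_dirs() -> set:
    """Site directories for the interpreter running this script."""
    dirs = set(getattr(site, "getsitepackages", lambda: [])())
    dirs.add(site.getusersitepackages())
    dirs.add(sysconfig.get_paths()["purelib"])
    return dirs


def scan_pth_files(dirs=None) -> dict:
    """Map each .pth file in the given site directories to its executable lines."""
    hits = {}
    for directory in sorted(dirs if dirs is not None else default_site_dirs()):
        for pth in sorted(Path(directory).glob("*.pth")):
            lines = executable_pth_lines(pth.read_text(encoding="utf-8", errors="replace"))
            if lines:
                hits[str(pth)] = lines
    return hits


if __name__ == "__main__":
    for path, lines in scan_pth_files().items():
        print(path)
        for line in lines:
            print("   ", line[:120])
```

Run it with each interpreter and virtualenv on the host, since each has its own site directories.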

Telnyx PyPI (Mar 27 — v4.87.1, v4.87.2)

Same .pth persistence mechanism as LiteLLM, with the addition of WAV steganography for payload delivery and Windows targeting. The RSA key embedded in the Telnyx payload matches LiteLLM, confirming the same actor and C2 infrastructure. If both were installed in the same environment, treat it as a single combined exposure. Safe version: v4.87.3 or later.

Endor Labs: Telnyx analysis · Endor Labs: GHSA-955r-262c-33jc · ramimac.me/teampcp
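A quick way to check a Python environment against the versions named in the LiteLLM and Telnyx sections is to compare installed distribution versions with a small known-bad table. Below is a minimal sketch using importlib.metadata. It only sees the interpreter it runs under, so every virtualenv and container image needs its own run. It also cannot tell you whether a bad version was installed and later upgraded away.

```python
from importlib import metadata

# Compromised PyPI releases named in the LiteLLM and Telnyx sections above
KNOWN_BAD = {
    "litellm": {"1.82.7", "1.82.8"},
    "telnyx": {"4.87.1", "4.87.2"},
}


def compromised(installed: dict, known_bad=KNOWN_BAD) -> dict:
    """Return {name: version} for installed versions listed in known_bad."""
    return {
        name: version
        for name, version in installed.items()
        if version in known_bad.get(name.lower(), set())
    }


def installed_versions() -> dict:
    """Name -> version for every distribution visible to this interpreter."""
    versions = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions with broken metadata
            versions[name] = dist.version
    return versions


if __name__ == "__main__":
    hits = compromised(installed_versions())
    for name, version in hits.items():
        print(f"COMPROMISED: {name}=={version}: treat this host as compromised")
    raise SystemExit(1 if hits else 0)
```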

Axios npm (Mar 31 — v1.14.1, v0.30.4) — ~3 hour window

Attributed at time of writing to UNC1069 (North Korea-nexus), not TeamPCP. A cross-platform RAT was dropped via a hijacked maintainer account. The exposure window was approximately 3 hours (03:00–06:00 UTC). If you installed either version in that window, treat the machine or container as potentially compromised. Safe version: v1.14.2 or later.

Datadog technical breakdown · StepSecurity write-up · GitHub Advisory GHSA-fw8c-xr5c-95f9 · Socket.dev analysis

Persistence Artefacts — All Waves

For any persistent machine (self-hosted runner, developer workstation, Docker-based scanning service) that may have been exposed across any of the above waves, known artefact names include sysmon.py, pglog, audiomon.py, and audiomon.service on Linux; msbuild.exe in the Windows startup folder; and node-setup pods in Kubernetes. For full artefact lists and IOCs, refer to the vendor analyses linked in each section above and the comprehensive reference at ramimac.me/teampcp.

Do not attempt to clean in place. Rebuild from a known-good state.

If Compromised — Rotate Everything

Regardless of which wave affected you, rotate all credentials accessible from the compromised environment:

- GitHub PATs and machine tokens

- AWS IAM keys and OIDC roles

- Docker Hub and GHCR credentials

- SSH keys present on the runner or machine

- Kubernetes tokens and kubeconfig

- Any .env values accessible from CI or the affected host

- PyPI and npm publishing tokens

- Any cloud or SaaS credentials stored in shell environment variables or credential files

Full IOC Reference

The indicators below cover the key infrastructure, file artefacts, and compromised package versions identified across the TeamPCP supply chain campaign and the Axios/UNC1069 compromise. This is not exhaustive — for a comprehensive and continuously updated IOC set for the TeamPCP campaign, ramimac maintains an excellent reference at ramimac.me/teampcp. For the Axios compromise (attributed at time of writing to UNC1069), additional IOCs and technical analysis are available from StepSecurity's write-up and the npm security advisory (GHSA-fw8c-xr5c-95f9).

Closing Thoughts

The open source ecosystem runs on trust, and that trust is being systematically weaponised. Maintainers of widely used projects are often individuals or small teams with limited security resources, long-lived credentials, and no enterprise security backing. They are the weakest link in supply chains that extend into the most privileged environments in the world.

TeamPCP did not discover a new class of vulnerability. They operationalised something the security community has known about for years. The techniques — mutable tags, long-lived tokens, postinstall hooks, trusted third-party actions — are not novel. What is new is the scale, speed, and coordination with which they were executed, and the credential cascade model that turns one compromised maintainer into access across dozens of organisations.

The axios compromise, arriving days later and attributed at the time of writing to a North Korea-nexus actor (UNC1069) — though not all researchers have confirmed this attribution — suggests we are entering a period where supply chain attacks are no longer exceptional events. Whether from financially motivated groups like TeamPCP or nation-state adjacent actors, they are becoming a standard tool in the threat actor toolkit. The identity of the attacker matters less than the fact that the same open surface was exploited twice in the same week by different groups.

The defensive answer is not to stop using open source. It is to use it with the same discipline we apply to any other trust relationship: verify, pin, scope, rotate, and monitor.

These changes are not technically complex. They are operationally inconvenient. That inconvenience is now the cost of not getting compromised.

Ransom-ISAC publishes threat intelligence to help organisations understand and respond to ransomware and related threats. If you have indicators or intelligence related to TeamPCP or related campaigns, please reach out.

References: CrowdStrike (initial detection), Aqua Security (official disclosure GHSA-69fq-xp46-6x23), Endor Labs (LiteLLM/Telnyx analysis), StepSecurity (KICS/Axios analysis, 10 Layers Deep), OpenSourceMalware.com (aquasec-com org defacement), Aikido Security (CanisterWorm), Socket.dev (npm wave), Flare (TeamPCP profiling), Maltrail, ramimac, AdnaneKhan